
In this article, we test several solutions for Optical Character Recognition (OCR) on two use cases: a set of miscellaneous text images, and the recognition of information on bank cheques (name, bank, amount, etc.). Enjoy your reading!

What is OCR?

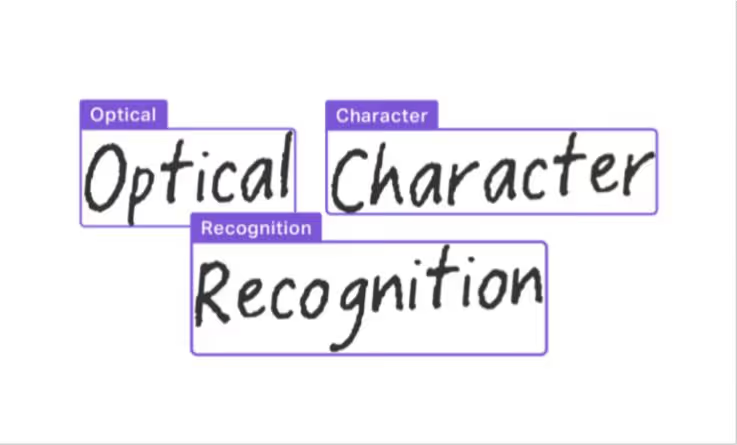

The rise of artificial intelligence in recent years has been driven by the digitalization that now pervades every professional environment. Most companies, large and small, have started this digital transformation, and one of its main axes is the digitization of data. A computer vision service was developed for exactly this purpose: Optical Character Recognition, commonly known as OCR.

The origin of OCR dates back to the 1950s, when David Shepard founded Intelligent Machines Research Corporation (IMRC), the world’s first supplier of OCR systems, which private companies used to convert printed messages into machine language for computer processing.

Today, OCR systems no longer need to be designed for a particular font. OCR services are intelligent, and OCR has become one of the most important branches of computer vision, and more generally of artificial intelligence. With OCR, it is possible to obtain a text file from many digital media:

- PDF file

- PNG or JPG images containing text

- Handwritten documents

OCR for handwritten documents, images or PDF documents is relevant to companies in every field and activity. Some companies have a more critical need for OCR on handwriting, combined with Natural Language Processing (NLP) for text analysis. For example, the banking industry uses OCR to approve cheques (details, signature, name, amount, etc.) or to verify credit cards (card number, name, expiration date, etc.). Many other sectors make heavy use of OCR, such as health (scanning patient records), police (license plate recognition) or customs (extracting passport information).

How does OCR work?

OCR technology consists of 3 steps:

- Image pre-processing stage, which consists of processing the image so that it can be exploited and optimized for character recognition. Pre-processing operations include realignment (deskewing), noise removal, binarization, line removal, zoning, word detection, script recognition, segmentation, normalization, etc.

- Extraction of the statistical properties of the image. This is the key step for locating and identifying the characters in the image, as well as their structures.

- Post-processing stage, which consists of reconstructing the image as it was before the analysis and highlighting the “bounding boxes” (rectangles delimiting the text in the image) of the identified character sequences.
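To make these stages concrete, here is a toy sketch in pure Python. It is not how any of the commercial engines below work: it assumes a grayscale image given as a list of rows, uses a fixed global threshold for binarization (real systems use adaptive methods such as Otsu's), locates candidate characters with a simple vertical projection profile, and returns one bounding box per candidate. The recognition step itself (classifying each crop as a character) is deliberately omitted.

```python
from typing import List, Tuple

Gray = List[List[int]]    # grayscale pixels, 0 = black ... 255 = white
Binary = List[List[int]]  # 1 = ink, 0 = background

def binarize(img: Gray, threshold: int = 128) -> Binary:
    """Pre-processing: a fixed global threshold turns the image into ink/background."""
    return [[1 if px < threshold else 0 for px in row] for row in img]

def segment_columns(binary: Binary) -> List[Tuple[int, int]]:
    """Locate candidate characters as runs of columns containing ink (vertical projection profile)."""
    width = len(binary[0])
    profile = [sum(row[x] for row in binary) for x in range(width)]
    segments: List[Tuple[int, int]] = []
    start = None
    for x, ink in enumerate(profile):
        if ink and start is None:
            start = x  # a new character begins
        elif not ink and start is not None:
            segments.append((start, x - 1))  # a blank column closes the character
            start = None
    if start is not None:  # character touching the right edge
        segments.append((start, width - 1))
    return segments

def bounding_box(binary: Binary, x0: int, x1: int) -> Tuple[int, int, int, int]:
    """Post-processing: (left, top, right, bottom) of the ink between columns x0 and x1."""
    rows = [y for y, row in enumerate(binary) if any(row[x0:x1 + 1])]
    return (x0, min(rows), x1, max(rows))
```

Chaining `binarize`, `segment_columns` and `bounding_box` yields one rectangle per ink run, which is the kind of overlay the post-processing stage draws on the original image.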

Who are the OCR suppliers?

During our study on OCR, we put ourselves in the position of a company that wants to use OCR to solve character recognition problems on PDF and image (JPG) media. We want a high level of performance at the lowest possible cost. So we asked ourselves: “Which vendor has the most suitable OCR solution for our use cases?”

We have therefore chosen 4 OCR solution providers:

- Google Cloud Platform: Vision OCR API

- Microsoft Azure Cognitive Services: Computer Vision OCR

- Amazon Web Services: Amazon Textract

- OCR Space

We therefore compared three major players in the AI market with one smaller OCR provider.

What are the different OCR APIs?

OCR Space

It is possible to use OCR Space for free online, to “OCRize” an image. The following formats are supported: .jpg, .png, .webp, .pdf. A wide variety of OCR languages are also available. OCR Space offers the possibility to import the image from your local computer or from an Internet URL.

Other options are also available such as:

- Auto rotation of the image if necessary

- Invoice scan / table recognition: for documents such as payment receipts, invoices or tables, the scan takes the table-like layout into account.

You can use the classic OCR engine, or a different engine that is slower but more accurate on complex text, special characters, etc. The result consists of the extracted text plus an overlay (layer) showing the bounding boxes of the identified text and their content. You can also obtain a .pdf file containing the result with a text layer (the text projected onto the image).

The API is very quick and simple to implement. You can choose whether or not to include the bounding box coordinates of each word in the result. Supported formats are PDF, GIF, PNG, JPG, TIF and BMP. All the other options of the OCR Space online application can also be configured through the API.
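As an illustration, the sketch below calls OCR Space on an image hosted at a public URL using only the Python standard library. The endpoint and field names (`apikey`, `url`, `isOverlayRequired`, `OCREngine`, `isTable`, `detectOrientation`) and the response keys (`ParsedResults`, `TextOverlay`, `Lines`, `Words`) reflect our reading of the OCR.space API documentation and should be checked against the current docs; the helper function names are our own.

```python
import json
import urllib.parse
import urllib.request

OCR_SPACE_ENDPOINT = "https://api.ocr.space/parse/image"

def build_ocr_space_form(image_url, api_key, language="eng", overlay=True,
                         engine=1, is_table=False, detect_orientation=False):
    """Form fields for an OCR Space request on an image reachable by URL."""
    return {
        "apikey": api_key,
        "url": image_url,
        "language": language,
        "isOverlayRequired": "true" if overlay else "false",  # word bounding boxes
        "OCREngine": str(engine),  # 1 = classic engine, 2 = slower engine for complex text
        "isTable": "true" if is_table else "false",  # receipt / table mode
        "detectOrientation": "true" if detect_orientation else "false",  # auto rotation
    }

def parse_ocr_space_words(response):
    """Return (text, left, top, width, height) for every word of every parsed page."""
    if response.get("IsErroredOnProcessing"):
        raise RuntimeError(response.get("ErrorMessage"))
    words = []
    for result in response.get("ParsedResults", []):
        overlay = result.get("TextOverlay") or {}  # absent when no overlay was requested
        for line in overlay.get("Lines", []):
            for w in line["Words"]:
                words.append((w["WordText"], w["Left"], w["Top"], w["Width"], w["Height"]))
    return words

def ocr_space_url(image_url, api_key, **options):
    """POST the form (urlencoded) and return the decoded JSON response."""
    data = urllib.parse.urlencode(build_ocr_space_form(image_url, api_key, **options)).encode()
    request = urllib.request.Request(OCR_SPACE_ENDPOINT, data=data)
    with urllib.request.urlopen(request, timeout=60) as resp:
        return json.loads(resp.read())
```

Keeping request building and response parsing in separate functions makes it easy to test the parsing logic offline and to swap the transport (for example, uploading a local file instead of a URL).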

Google Cloud Vision

For Google, OCR is bundled into the Google Cloud Vision product, so when you try the image recognition service, you get object, label and text detection at once. For text detection, no parameter can be changed; you can only read the response.

Concerning the API, the implementation is slightly more complex. This is mainly due to the many packages that need to be installed. It is also necessary to create a Vision project on the Google Cloud console and then follow the whole process to create a Google authentication key. This key allows the use of the Google Vision API. The result is composed of the text, as well as the coordinates of the bounding boxes corresponding to each word.
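A minimal sketch of such a call, assuming the official `google-cloud-vision` Python client is installed and the authentication key is exposed through the `GOOGLE_APPLICATION_CREDENTIALS` environment variable. The SDK is imported inside the function so that the parsing helper can be used without it; the function names are our own.

```python
def detect_text_google(path):
    """Run Google Cloud Vision text detection on a local image file."""
    from google.cloud import vision  # requires `pip install google-cloud-vision`

    client = vision.ImageAnnotatorClient()
    with open(path, "rb") as f:
        image = vision.Image(content=f.read())
    response = client.text_detection(image=image)
    if response.error.message:
        raise RuntimeError(response.error.message)
    return response.text_annotations

def words_with_boxes(annotations):
    """Skip the first annotation (the full text) and return (word, [(x, y), ...]) pairs."""
    return [
        (a.description, [(v.x, v.y) for v in a.bounding_poly.vertices])
        for a in annotations[1:]
    ]
```

The first element of `text_annotations` holds the whole detected text; the following elements are the individual words, each with the four vertices of its bounding polygon.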

Amazon AWS Textract

The console interface simply lets you upload an image and get it back with the bounding boxes of the recognized text, along with the corresponding words shown on the right (in our example, the results were completely wrong). No parameter can be modified, but the user can choose form or table detection to obtain clearer and more precise results.

Using the Amazon Textract API is tedious: several steps must be completed before the API can be used. First, create an IAM user and grant it Full Access rights to Amazon S3 (storage) and Amazon Textract. Then install the AWS SDK for your language (Python in our case), create an access key for that IAM user, and download the .csv file containing the access key and the secret key. Once these steps are done, the API itself can be called.

The result is a JSON response containing the associated bounding box for each word.
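A minimal sketch of the call with `boto3`, assuming the IAM access key is already configured (for example with `aws configure`) and that `us-east-1` is an acceptable region (an assumption; use your own). The synchronous `detect_document_text` operation accepts image bytes directly; `boto3` is imported inside the function so the parsing helper works without it, and the function names are our own.

```python
def detect_text_textract(path, region="us-east-1"):
    """Send a local image to Amazon Textract and return the raw JSON-like response."""
    import boto3  # requires `pip install boto3` and configured AWS credentials

    client = boto3.client("textract", region_name=region)
    with open(path, "rb") as f:
        return client.detect_document_text(Document={"Bytes": f.read()})

def textract_words(response):
    """(text, (left, top, width, height)) for each WORD block.

    Textract bounding boxes are ratios of the page width and height, not pixels.
    """
    words = []
    for block in response.get("Blocks", []):
        if block["BlockType"] == "WORD":  # PAGE and LINE blocks are skipped
            box = block["Geometry"]["BoundingBox"]
            words.append((block["Text"], (box["Left"], box["Top"], box["Width"], box["Height"])))
    return words
```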

Microsoft Azure OCR API

Microsoft Azure Cognitive Services does not offer an online platform to try its OCR solution. However, it does offer an API for the OCR service. You need to create a Cognitive Services project to retrieve an API key; after that, the implementation is relatively fast.

With a short Python script, it is possible to display each word with its bounding box, as well as the processed image.
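A minimal sketch with the Python standard library, assuming the Computer Vision v3.2 `ocr` REST operation (the article does not say which API version was used). `endpoint` and `key` come from your Cognitive Services resource; the function names are our own.

```python
import json
import urllib.request

def azure_ocr(image_bytes, endpoint, key):
    """POST raw image bytes to the Computer Vision v3.2 OCR operation."""
    url = endpoint.rstrip("/") + "/vision/v3.2/ocr?language=unk&detectOrientation=true"
    request = urllib.request.Request(url, data=image_bytes, headers={
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/octet-stream",
    })
    with urllib.request.urlopen(request, timeout=60) as resp:
        return json.loads(resp.read())

def azure_words(result):
    """Flatten regions -> lines -> words into (text, (x, y, width, height)) tuples."""
    words = []
    for region in result.get("regions", []):
        for line in region["lines"]:
            for word in line["words"]:
                # boundingBox is a comma-separated string "x,y,width,height" in pixels
                x, y, w, h = (int(v) for v in word["boundingBox"].split(","))
                words.append((word["text"], (x, y, w, h)))
    return words
```

The pixel boxes returned by `azure_words` can then be drawn on the processed image with any imaging library.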

What are the OCR Use Cases?

To make this phase much easier and faster, we used Eden AI to call the APIs of the different vendors. Eden AI gives access to OCR solutions (among others) from different vendors with the same code and the same API, and returns a .json response in the same format for all of them.

We only needed to write code that calls Eden AI for the 100 images and retrieves the text from the result, for each of the 4 available vendors: Google, AWS, Microsoft and OCR Space. It is even possible to run all 4 APIs in a single Eden AI API call. This avoids implementing a different calling method per provider for the 100 images, and writing a separate result-extraction method for each provider (which would depend on the format of each response).
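The benchmark loop described above could look like the following sketch. The endpoint URL, the JSON fields (`providers`, `language`, `file_url`), the provider identifiers and the response shape (one entry per provider with `status` and `text`) are all assumptions for illustration and must be checked against the current Eden AI documentation; the function names are hypothetical.

```python
import json
import urllib.request

# ASSUMPTIONS: endpoint, payload fields and provider identifiers are illustrative only.
EDEN_OCR_URL = "https://api.edenai.run/v2/ocr/ocr"
PROVIDERS = ["google", "amazon", "microsoft", "ocr_space"]

def eden_ocr(image_url, api_key, providers=PROVIDERS, language="en"):
    """One call that runs every provider on the same image."""
    body = json.dumps({
        "providers": ",".join(providers),
        "language": language,
        "file_url": image_url,
    }).encode()
    request = urllib.request.Request(EDEN_OCR_URL, data=body, headers={
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    })
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.loads(resp.read())

def texts_by_provider(response):
    """Assumed shape {provider: {"status": ..., "text": ...}}; failures map to None."""
    return {
        provider: (result.get("text") if result.get("status") == "success" else None)
        for provider, result in response.items()
    }

def run_benchmark(image_urls, api_key):
    """{image_url: {provider: text or None}} for the whole dataset."""
    return {url: texts_by_provider(eden_ocr(url, api_key)) for url in image_urls}
```

Because every provider's answer is reduced to the same `{provider: text}` mapping, the scoring code does not need to know anything about each vendor's native response format.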

Use case 1: Images

We first test the Eden AI API on random images. The database contains about a hundred text images taken in various contexts.

The images come from very different sources and are small .jpg files (dataset images are often kept small to limit the size of the dataset). About a hundred images were randomly selected from this dataset. A Python script checks whether each API's response matches the label (text) assigned to each image.

An important finding on this database is that Microsoft’s performance, and to a lesser extent that of OCR Space, is skewed by the fact that the Microsoft API rejected most images because of their small size. The OCR Space API accepted the images, but for some of them it did not detect any text.

However, this issue does not affect the Google OCR and AWS Textract APIs, which fully support this image format.

Please note that the results represent the percentage of images whose prediction is exactly correct: a prediction close to the real text but not identical counts as a failure.

For this dataset of various images, the final ranking is:
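The exact-match metric can be written as a short sketch. It assumes predictions are stored as `{image_id: text}` per provider, with `None` when the API rejected the image or found no text; stripping surrounding whitespace before comparing is our own assumption, not something the benchmark specifies. Function names are hypothetical.

```python
def exact_match_accuracy(predictions, labels):
    """Percentage of labelled images whose predicted text equals the label exactly.

    A near miss (e.g. one wrong character) counts as a failure.
    Surrounding whitespace is stripped before comparing (an assumption).
    """
    if not labels:
        raise ValueError("no labelled images")
    hits = 0
    for image_id, label in labels.items():
        pred = predictions.get(image_id)  # None = rejected image or no text found
        if pred is not None and pred.strip() == label.strip():
            hits += 1
    return 100.0 * hits / len(labels)

def rank_providers(results_by_provider, labels):
    """Sort providers by exact-match accuracy, best first."""
    scores = {p: exact_match_accuracy(preds, labels) for p, preds in results_by_provider.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
```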

Google > AWS > OCR Space > Microsoft

Use case 2: Cheque analysis

The objective of this second use case is to test the performance of the 4 providers on a database of 100 cheques. We defined 4 text elements to recognize: last name, first name, cheque amount and bank name.

The results represent the percentage of “Last Name”, “First Name”, “Amount” and “Bank” fields predicted perfectly. Any spelling difference counts as a failure. The results give useful indications about the strengths and weaknesses of each API, but they are not a universal conclusion: each use case may produce different results. It is therefore essential to run these tests on each specific dataset.
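The per-field scoring can be sketched as follows. It assumes the four fields have already been extracted from each provider's raw OCR text into a dictionary per cheque (the article does not describe how that extraction was done); field keys and function names are hypothetical.

```python
FIELDS = ("last_name", "first_name", "amount", "bank")  # hypothetical field keys

def field_accuracy(predicted, truth):
    """Percentage of cheques where each field is recognized exactly.

    predicted / truth: {cheque_id: {field: value}}; any spelling difference is a failure,
    and a missing cheque or field in `predicted` also counts as a failure.
    """
    if not truth:
        raise ValueError("no labelled cheques")
    scores = {}
    for field in FIELDS:
        hits = sum(
            1 for cheque_id, expected in truth.items()
            if predicted.get(cheque_id, {}).get(field) == expected[field]
        )
        scores[field] = 100.0 * hits / len(truth)
    return scores
```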

Nevertheless, Google clearly achieves the best results for detecting the first and last name on the cheque, i.e. handwritten text. However, the Amazon solution performs best at recognizing the amount, i.e. handwritten figures.

Finally, Google and OCR Space have the best recognition rates for the bank’s name. Since the bank’s name is printed on the cheque, this suggests that OCR Space may be better suited to printed (digital) text than to handwriting.

Overall, the ranking for this use case is:

Google > AWS > OCR Space > Microsoft

Pricing

The cost of the APIs follows volume thresholds, with the unit price decreasing as the number of calls grows.

Prices are shown in US dollars per image (for PDFs, per page). For most usage levels, prices are very similar across suppliers and stay roughly flat up to 5M calls, beyond which they fall by more than 60%.

Please note that the prices displayed in this table may have changed since this article was written.
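To estimate a monthly bill under decreasing tiers, a sketch like the one below can help. It assumes graduated tiers, where each price applies only to the calls falling inside its band (check each provider's terms, since some apply one price to the whole volume). The tier values in `EXAMPLE_TIERS` are hypothetical placeholders, not any provider's real price list.

```python
def tiered_cost(calls, tiers):
    """Total price for `calls` units under graduated tiers.

    tiers: list of (upper_bound, price_per_1000_calls) sorted by bound;
    the last bound should be None (no upper limit).
    """
    total, lower = 0.0, 0
    for upper, price_per_1000 in tiers:
        if calls <= lower:
            break
        band_top = calls if upper is None else min(calls, upper)
        total += (band_top - lower) / 1000 * price_per_1000  # calls billed in this band
        if upper is None:
            break
        lower = upper
    return total

# HYPOTHETICAL tiers in USD per 1,000 calls, for illustration only.
EXAMPLE_TIERS = [(1_000_000, 1.50), (5_000_000, 1.00), (None, 0.40)]
```

With these hypothetical tiers, 6M calls cost 1,000 × 1.50 + 4,000 × 1.00 + 1,000 × 0.40 = $5,900, so the discounted band only affects the calls beyond 5M.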

Overall, this study shows a trend in favour of Google’s OCR API. However, Google did not achieve the best results for predicting the amounts written on cheques, where AWS performed best.

Why choose Eden AI?

For each use case, it is best to benchmark the different OCR solutions in order to choose the most suitable one. As observed, many parameters affect the performance of the different solutions: image quality, image format, handwritten or digital text, and the nature of the characters (letters, numbers, language). It is therefore very hard to know which API provider is most suitable before testing. This is where Eden AI proved very helpful: with a single Eden AI account and API key, we could test the OCR APIs of four providers on our own data.

Moreover, Eden AI then lets us use the selected solution through the Eden AI API: with just an Eden AI account and API key, we can access a very large choice of AI APIs from various vendors (including OCR APIs). This avoids over-dependence on a single provider and even makes it possible to use results from several vendors at the same time.

If you are a solution provider and want to integrate with Eden AI, contact us at: contact@edenai.co
