Here is our selection of the best Language Detection APIs to help you choose and access the right engine according to your data.

What is Language Detection?

What does Language Detection do?

Language detection automatically detects the language(s) present in a document based on the content of the document. By using a language detection engine, you can obtain the most likely language for a piece of input text, or a set of possible language candidates with their associated probabilities.

A brief history of Language Detection methods

Language detection predates computational methods – the earliest interest in the area was motivated by the needs of translators, and simple manual methods were developed to quickly identify documents in specific languages.

The earliest known work to describe a functional language detection program for text is by Mustonen in 1965, who used multiple discriminant analysis to teach a computer how to distinguish between English, Swedish and Finnish.

In the early 1970s, Nakamura considered the problem of automatic language detection. His language identifier was able to distinguish between 25 languages written with the Latin alphabet. As features, the method used the occurrence rates of characters and words in each language.

The most-cited early work on automatic language detection is by Cavnar and Trenkle (1994). Their method builds per-document and per-language profiles, and classifies a document according to the language profile it is most similar to, using a rank-order similarity metric.
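The profile-and-rank idea described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the n-gram range (1 to 3), the profile size (300), the penalty for missing n-grams, the toy training sentences, and the function names `ngram_profile`, `out_of_place` and `detect` are all choices made for this example.

```python
from collections import Counter


def ngram_profile(text, max_n=3, top_k=300):
    """Return the top_k most frequent character n-grams (n = 1..max_n), ranked."""
    counts = Counter()
    # Pad each word with spaces so word boundaries become part of the n-grams.
    for word in text.lower().split():
        padded = f" {word} "
        for n in range(1, max_n + 1):
            for i in range(len(padded) - n + 1):
                counts[padded[i:i + n]] += 1
    # Sort by descending frequency, then alphabetically for a deterministic ranking.
    ranked = sorted(counts, key=lambda gram: (-counts[gram], gram))
    return ranked[:top_k]


def out_of_place(doc_profile, lang_profile):
    """Sum of rank differences; n-grams missing from the language profile get a fixed penalty."""
    lang_rank = {gram: rank for rank, gram in enumerate(lang_profile)}
    max_penalty = len(lang_profile)  # assumed penalty for unseen n-grams
    return sum(
        abs(rank - lang_rank[gram]) if gram in lang_rank else max_penalty
        for rank, gram in enumerate(doc_profile)
    )


def detect(text, lang_profiles):
    """Return the language whose profile is closest to the document profile."""
    doc_profile = ngram_profile(text)
    return min(lang_profiles, key=lambda lang: out_of_place(doc_profile, lang_profiles[lang]))


# Toy training samples (illustrative only; real profiles need far more text).
SAMPLES = {
    "en": "the quick brown fox jumps over the lazy dog and then the dog sleeps in the sun",
    "fr": "le renard brun saute par dessus le chien paresseux et puis le chien dort au soleil",
}
PROFILES = {lang: ngram_profile(sample) for lang, sample in SAMPLES.items()}

print(detect("the lazy fox sleeps", PROFILES))
print(detect("le chien saute", PROFILES))
```

With profiles this small the result is fragile on unseen words. In practice each language profile would be built from a much larger corpus.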

Top 10 Language Detection APIs:

1. AWS - Available on Eden AI

Amazon Comprehend uses natural language processing (NLP) to extract insights about the content of documents. Amazon Comprehend processes any text file in UTF-8 format, and semi-structured documents, like PDF and Word documents. It develops insights by recognizing the entities, key phrases, language, sentiments, and other common elements in a document.

2. Google Cloud - Available on Eden AI

The Cloud Natural Language API provides natural language understanding technologies to developers, including sentiment analysis, entity analysis, entity sentiment analysis, content classification, and syntax analysis. This API is part of the larger Cloud Machine Learning API family. Each API call also detects and returns the language, if a language is not specified by the caller in the initial request.

3. IBM Watson® - Available on Eden AI

IBM offers a Language Identification (LID) service that combines several techniques, such as character n-grams, machine learning, and neural networks, to identify the language of text. Trained on a vast dataset of labeled text samples in different languages, the service can detect over 400 languages and can be applied to tweets, news articles, and other types of text. It can also detect where the language changes within a text, down to the word level.

4. Intellexer

Intellexer is a linguistic platform that incorporates powerful linguistic tools for analyzing natural-language text. Intellexer Language Recognizer combines statistical and linguistic technologies to achieve the highest recognition results. Its language detection algorithm is based on a strong mathematical model, the vector space model: it scans the document contents, calculates N-gram frequencies, and represents them as vectors in a multidimensional space.

5. Microsoft Azure - Available on Eden AI

The Text Analytics API is a cloud-based service that provides advanced natural language processing over raw text, and includes four main functions: sentiment analysis, key phrase extraction, named entity recognition, and language detection.

6. ModernMT - Available on Eden AI

ModernMT is a neural machine translation technology developed by Translated. It uses advanced machine learning techniques to automatically detect the source language of a text and translate it into the target language. Language detection is performed by neural networks trained on a large dataset of text in various languages.

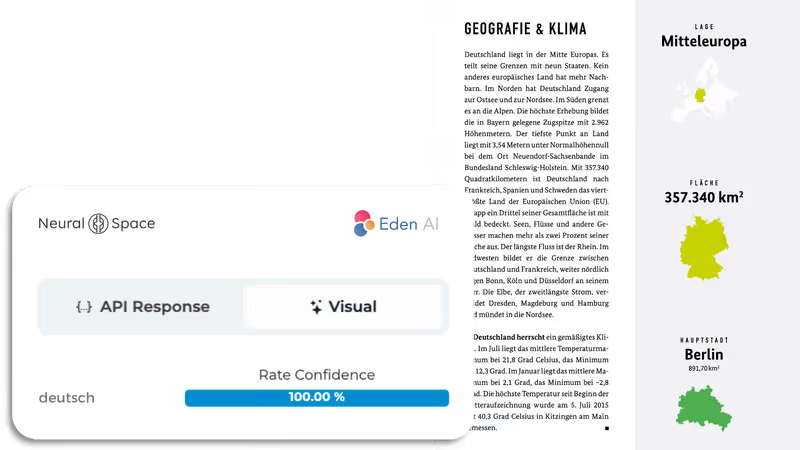

7. NeuralSpace - Available on Eden AI

NeuralSpace is a Software as a Service (SaaS) platform that offers developers a no-code web interface and a suite of APIs for text and voice Natural Language Processing (NLP) tasks, which you can use without any Machine Learning (ML) or Data Science knowledge. Along with common languages like English, German, and French, the platform supports various languages spoken across India, South East Asia, Africa, the Middle East, Scandinavia and Eastern Europe. Alongside Language Detection, NeuralSpace specializes in Translation, Entity Recognition and Speech-to-Text, amongst others.

8. One AI - Available on Eden AI

One AI provides a Language Detection solution that uses advanced language analytics and natural language processing techniques to automatically determine the language of a given text or speech. One AI also provides several multilingual capabilities including processing, transcription, analytics, and comprehension, which can be integrated into various products such as podcast platforms, CRM, content publishing tools, and so forth.

9. OpenAI - Available on Eden AI

Although OpenAI doesn’t provide a dedicated Language Detection feature, its well-known GPT-3 models can be used for language detection, as they have been applied to various NLP tasks including language identification, machine translation, and text summarization. Additionally, the models are general-purpose and can be fine-tuned for specific tasks, including language detection.

10. spaCy

spaCy is an open-source software library for advanced natural language processing, written in the programming languages Python and Cython. spaCy comes with pretrained pipelines and currently supports tokenization and training for 60+ languages. It features state-of-the-art speed and neural network models for tagging, parsing, named entity recognition, text classification and more, multitask learning with pretrained transformers like BERT, as well as a production-ready training system and easy model packaging, deployment and workflow management. spaCy is commercial open-source software, released under the MIT license.

Some Language Detection API use cases

You can use Language Detection in numerous fields. Here are some examples of common use cases:

- Content Management: sort and organize documents based on their language.

- Computer-assisted Translation (CAT): identify the source language of a text, so that the appropriate machine translation model can be used.

- Customer Service: route customer inquiries to agents who speak the appropriate language.

- Cyber Security: detect phishing, spam, or other malicious emails and messages by identifying the language used in the content.

- E-commerce: display a website or mobile app in the appropriate language for a user based on their location or preferred language.

- Language Learning: identify the language of a text and match it with the appropriate course materials for the student.

- Natural Language Processing (NLP): preprocess text data by identifying the language of a document before further processing, such as sentiment analysis or machine translation.

- Search Engine Optimization (SEO): target specific languages in search engine results.

- Social Media Monitoring: identify the language of posts and comments on social media platforms, so that relevant content can be prioritized.

- Speech Recognition: identify the language of a spoken phrase, and use the appropriate speech recognition model.

Why choose Eden AI to manage your APIs

Companies and developers from a wide range of industries (Social Media, Retail, Health, Finances, Law, etc.) use Eden AI’s unique API to easily integrate Language Detection tasks in their cloud-based applications, without having to build their own solutions.

Eden AI offers multiple AI APIs on its platform across several technologies: Text-to-Speech, Language Detection, Sentiment Analysis, Summarization, Question Answering, Data Anonymization, Speech Recognition, and so forth.

We want our users to have access to multiple Language Detection engines and manage them in one place so they can reach high performance, optimize cost and cover all their needs. There are many reasons for using multiple APIs:

Fallback provider: the basics.

You need to set up a backup provider API that is called if and only if the main Language Detection API does not perform well (or is down). You can use the returned confidence score or other methods to check the provider's accuracy.
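The fallback logic described above could look roughly like the following sketch. It assumes each provider is wrapped in a function that returns a language code and a confidence score between 0 and 1. The names `detect_with_fallback`, `primary`, `backup` and the 0.8 threshold are hypothetical and do not correspond to any real provider SDK.

```python
from typing import Callable, Optional, Sequence, Tuple

# A detector takes text and returns (language_code, confidence in [0, 1]).
Detector = Callable[[str], Tuple[str, float]]


def detect_with_fallback(
    text: str,
    providers: Sequence[Tuple[str, Detector]],
    min_confidence: float = 0.8,
) -> Optional[Tuple[str, str, float]]:
    """Try providers in order and return (provider, language, confidence).

    The first answer that reaches min_confidence wins. If none does, the
    most confident answer seen is returned, or None if every provider failed.
    """
    best = None
    for name, detector in providers:
        try:
            language, confidence = detector(text)
        except Exception:
            # Provider is down or errored: move on to the next one.
            continue
        if confidence >= min_confidence:
            return name, language, confidence
        if best is None or confidence > best[2]:
            best = (name, language, confidence)
    return best


# Hypothetical stand-ins for real provider clients.
def primary(text):
    raise ConnectionError("primary provider unavailable")


def backup(text):
    return "fr", 0.93


result = detect_with_fallback(
    "Bonjour tout le monde", [("primary", primary), ("backup", backup)]
)
print(result)  # ('backup', 'fr', 0.93)
```

Catching every exception keeps the sketch short. A production version would more likely distinguish timeouts, quota errors and invalid requests.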

Performance optimization.

After the testing phase, you will be able to build a mapping of provider performance based on the criteria you have chosen (languages, fields, etc.). Each piece of data that you need to process will then be sent to the Language Detection API that performs best for it.
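As a rough sketch of such a mapping, the snippet below turns benchmark results into a routing table keyed by criterion. The provider names, the criteria and the accuracy figures are invented for illustration, and `build_routing_table` and `route` are hypothetical helper names.

```python
def build_routing_table(benchmark):
    """Map each criterion (e.g. a field) to the provider with the highest measured accuracy."""
    return {
        criterion: max(scores, key=scores.get)
        for criterion, scores in benchmark.items()
    }


def route(criterion, routing_table, default_provider):
    """Pick the provider for a criterion, falling back to a default for unseen criteria."""
    return routing_table.get(criterion, default_provider)


# Made-up accuracy figures from a hypothetical testing phase.
BENCHMARK = {
    "social_media": {"provider_a": 0.91, "provider_b": 0.95},
    "legal": {"provider_a": 0.98, "provider_b": 0.96},
}

table = build_routing_table(BENCHMARK)
print(table)  # {'social_media': 'provider_b', 'legal': 'provider_a'}
print(route("medical", table, default_provider="provider_a"))  # provider_a
```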

Cost-performance ratio optimization.

You can choose the cheapest Language Detection provider that performs well for your data.
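One way to express this choice in code is to filter providers by a minimum accuracy measured on your own data, then take the cheapest of those that remain. The figures, field names and the `cheapest_adequate_provider` helper below are assumptions for the example, not real pricing or benchmark data.

```python
def cheapest_adequate_provider(providers, min_accuracy):
    """Return the name of the lowest-cost provider meeting min_accuracy, or None."""
    adequate = [p for p in providers if p["accuracy"] >= min_accuracy]
    if not adequate:
        return None
    return min(adequate, key=lambda p: p["cost_per_1k_requests"])["name"]


# Invented numbers, for illustration only.
PROVIDERS = [
    {"name": "provider_a", "accuracy": 0.97, "cost_per_1k_requests": 1.00},
    {"name": "provider_b", "accuracy": 0.95, "cost_per_1k_requests": 0.40},
    {"name": "provider_c", "accuracy": 0.88, "cost_per_1k_requests": 0.10},
]

print(cheapest_adequate_provider(PROVIDERS, min_accuracy=0.94))  # provider_b
```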

Combine multiple AI APIs.

This approach is required if you are looking for extremely high accuracy. The combination leads to higher costs but makes your AI service safer and more accurate, because the Language Detection APIs confirm or contradict each other for each piece of data.
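A simple way to let providers validate each other is majority voting, sketched below. It assumes you have already collected one language code per provider. The `consensus_language` name and the "strictly more than half" agreement rule are illustrative choices, and other combination rules such as confidence-weighted voting are equally possible.

```python
from collections import Counter


def consensus_language(predictions, min_agreement=0.5):
    """Combine per-provider predictions by majority vote.

    predictions maps provider name -> language code. Returns
    (language, agreement_ratio) when the share of providers agreeing is
    strictly above min_agreement, otherwise (None, agreement_ratio).
    """
    if not predictions:
        return None, 0.0
    votes = Counter(predictions.values())
    language, count = votes.most_common(1)[0]
    agreement = count / len(predictions)
    if agreement > min_agreement:
        return language, agreement
    return None, agreement


# Hypothetical outputs from three providers for the same text.
language, agreement = consensus_language(
    {"provider_a": "es", "provider_b": "es", "provider_c": "pt"}
)
print(language, round(agreement, 2))  # es 0.67
```

When no language reaches the agreement threshold, the text can be flagged for manual review or sent to an additional provider.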

How can Eden AI help you?

Eden AI was built for multi-API use: it allows you to call multiple AI APIs from one place. Eden AI is the future of AI usage in companies.

- Centralized and fully monitored billing on Eden AI for all Language Detection APIs

- Unified API for all providers: simple and standard to use, quick switch between providers, access to the specific features of each provider

- Standardized response format: the JSON output format is the same for all suppliers thanks to Eden AI's standardization work. The response elements are also standardized thanks to Eden AI's powerful matching algorithms.

- The best Artificial Intelligence APIs in the market are available: big cloud providers (Google, AWS, Microsoft) and more specialized engines

- Data protection: Eden AI will not store or use any data. You can also filter providers to use only GDPR-compliant engines.

You can see Eden AI documentation here.

Next step in your project

The Eden AI team can help you with your Language Detection integration project. This can be done by:

- Organizing a product demo and a discussion to better understand your needs. You can book a time slot here: Contact

- Testing the public version of Eden AI for free. However, not all providers are available on this version; some are only available on the Enterprise version.

- Benefiting from the support and advice of a team of experts to find the optimal combination of providers according to the specifics of your needs

- Integrating with a third-party platform: we can quickly develop connectors
