What is an Image Moderation API?

An Image Moderation API is a tool that uses artificial intelligence to automatically analyze images and detect inappropriate, unsafe, or unwanted content, such as nudity, violence, hate symbols, or illegal activities, based on predefined rules or machine learning models.

Instead of reviewing images manually, developers can integrate an image moderation API into their applications to scan, classify, and filter images in real time. The API typically returns structured results (like labels, confidence scores, or risk levels), allowing platforms to decide whether to block, flag, blur, or approve the content.
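As a rough illustration of that last step, here is a minimal sketch of how a platform might turn returned confidence scores into an action. The `Label` type, the `decide` function, and the three thresholds are hypothetical and not taken from any specific provider; real values should be tuned against your own policy.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against your own policy and data.
BLOCK_AT = 0.90
BLUR_AT = 0.70
FLAG_AT = 0.40


@dataclass
class Label:
    name: str     # e.g. "nudity" or "violence"
    score: float  # confidence between 0 and 1


def decide(labels: list[Label]) -> str:
    """Return 'block', 'blur', 'flag', or 'approve' based on the highest score."""
    worst = max((label.score for label in labels), default=0.0)
    if worst >= BLOCK_AT:
        return "block"
    if worst >= BLUR_AT:
        return "blur"
    if worst >= FLAG_AT:
        return "flag"
    return "approve"
```

Using the single highest score keeps the logic easy to audit; a production policy would more likely use separate thresholds per category.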

Image Moderation API Use Cases

An image moderation API typically predicts whether an image contains potentially explicit content as a probability score between 0 and 1. It also returns a true/false determination of whether the image meets the configured conditions.

Image moderation APIs are widely used in social media platforms, marketplaces, dating apps, and content-sharing services to enforce community guidelines, protect users, and comply with legal requirements.

Detecting Nudity/Pornography

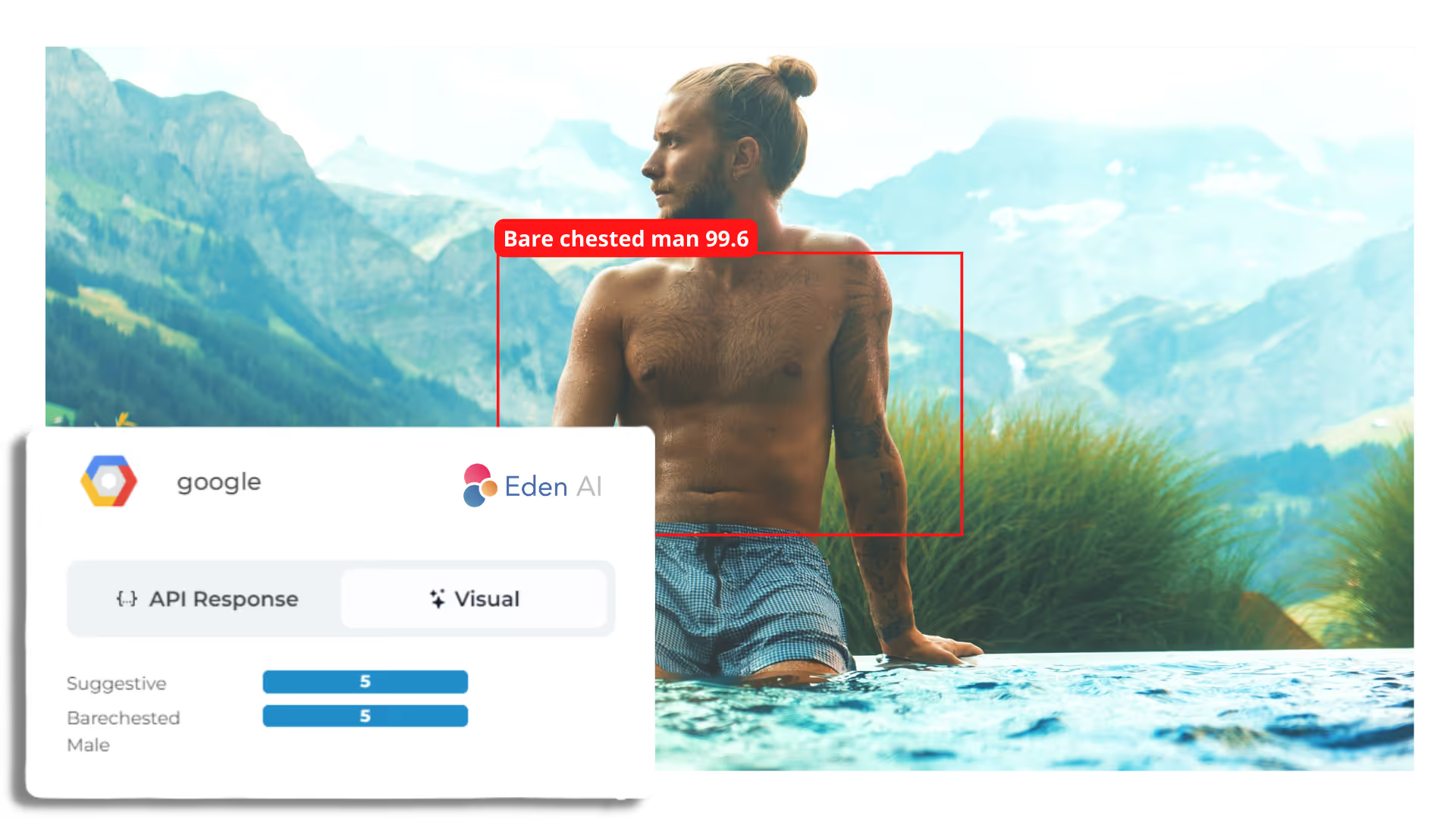

Image moderation APIs automatically detect several degrees of nudity, from partial nudity (bikini, underwear, cleavage, bare chest) to full nudity (naked bodies) to explicit nudity (suggestive poses, sexual content). They assign a confidence percentage to each image or video.

Detecting Profanity

Image moderation tools detect offensive gestures, signs, and text in images that are considered inappropriate: middle fingers, far-right flags, bad words, hateful language, etc. This helps communities on social platforms moderate user-generated content and keep it free of extremist content, harassment, and misinformation.

Detecting Misdemeanor/Crime

An Image Moderation API can moderate user-generated images or videos to remove or blur any gun-related content (along with other types of weapons) or displays of alcohol or drugs.

Detecting Violence

Image Moderation APIs also help detect graphic violence and gore such as blood, wounds, self-harm, human skulls, etc.

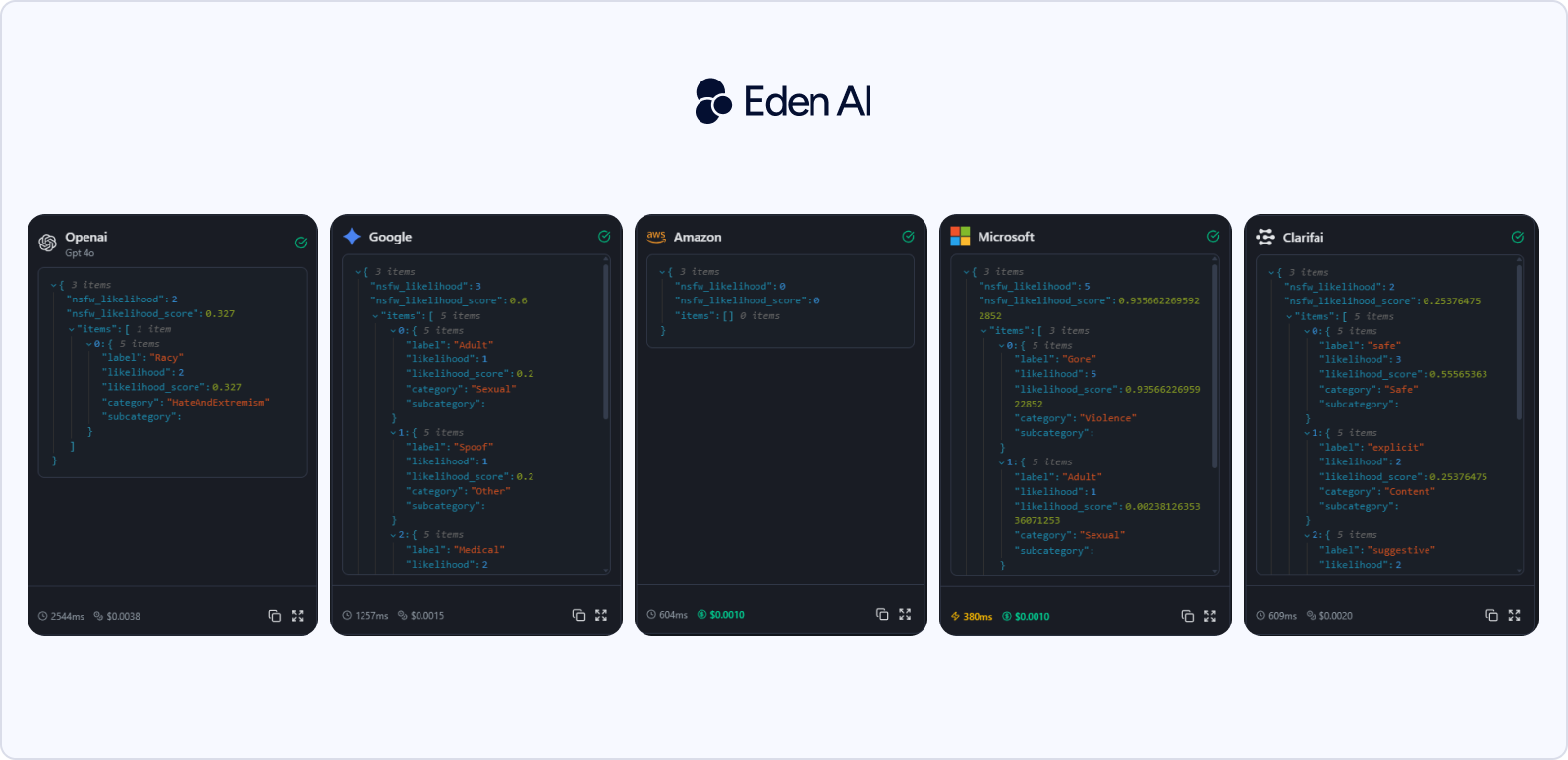

How We Chose the Best Image Moderation APIs

We first evaluated image moderation APIs through tests on Eden AI, comparing latency, cost, and output quality, including accuracy and data depth. Then we selected the best image moderation APIs by analyzing how they perform in realistic scenarios, not just based on documentation. Each API was evaluated across essential factors such as accuracy of detection, false positives/negatives, response time, and cost efficiency at scale.
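To make the false positive/negative criterion concrete, here is a minimal sketch of how such rates can be computed from a labeled test set. It illustrates the standard definitions only and is not the exact procedure used for this comparison; the function name and the input format (two parallel lists of booleans, where `True` means "unsafe") are assumptions.

```python
def moderation_metrics(predicted: list[bool], actual: list[bool]) -> dict[str, float]:
    """Compare API verdicts with human labels (True means 'unsafe')."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")

    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)          # unsafe, correctly caught
    fp = sum(1 for p, a in pairs if p and not a)      # safe, wrongly flagged
    fn = sum(1 for p, a in pairs if not p and a)      # unsafe, missed
    tn = sum(1 for p, a in pairs if not p and not a)  # safe, correctly passed

    def ratio(numerator: int, denominator: int) -> float:
        return numerator / denominator if denominator else 0.0

    return {
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "false_positive_rate": ratio(fp, fp + tn),
        "false_negative_rate": ratio(fn, fn + tp),
    }
```

A high false positive rate means legitimate uploads get blocked, while a high false negative rate means unsafe content slips through, so both are worth tracking for each provider.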

We also assessed developer experience, API consistency, and flexibility, including the ability to handle edge cases like memes, text-in-image content, or borderline explicit images. This methodology allows us to highlight the APIs that deliver reliable results in production.

Top 10 Image Moderation APIs in 2026 (Short Comparison)

Below is a short comparison of the 10 best image moderation APIs in 2026, based on their best use cases, moderation coverage, multimodal support, and customization options.

Top Image Moderation APIs by Use Cases

Teams should choose an image moderation API according to their type of business and use case. Here is a short list of which APIs to consider for each need:

- Big enterprise / GenAI governance: Azure, Alice, AWS.

- Simple baseline moderation: Google SafeSearch, OpenAI Moderation API.

- Specialist moderation API: Sightengine, Hive, PicPurify.

- Broader visual AI platform with moderation included: Imagga, Clarifai.

Top 10 Image Moderation APIs in 2026 (Updated)

The best image moderation APIs in 2026 are Azure AI Content Safety, Google Cloud Vision SafeSearch, AWS Rekognition, Hive Moderation, OpenAI Moderation API, Alice, Sightengine, Imagga, Clarifai, and PicPurify.

Azure AI Content Safety

Azure AI Content Safety is one of the best image moderation APIs in 2026 thanks to its coverage of text, images, and mixed media. It monitors four harm categories (hate, sexual, violence, and self-harm) and offers configurable severity thresholds and support for custom categories.
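Configurable severity thresholds can be pictured with the short sketch below. The input shape (a list of category/severity pairs) is only loosely modeled on what such a service returns and is an assumption, as are the function name, the threshold values, and the default for unknown categories; check the official response schema before relying on it.

```python
# Hypothetical per-category thresholds: a higher severity means more harmful content.
THRESHOLDS = {"Hate": 2, "Sexual": 4, "Violence": 4, "SelfHarm": 2}
DEFAULT_THRESHOLD = 2  # assumed fallback for categories not listed above


def violations(analysis: list[dict], thresholds: dict[str, int] = THRESHOLDS) -> list[str]:
    """Return the categories whose severity reaches the configured threshold."""
    return [
        item["category"]
        for item in analysis
        if item["severity"] >= thresholds.get(item["category"], DEFAULT_THRESHOLD)
    ]


# Illustrative input, not real API output.
sample = [
    {"category": "Hate", "severity": 2},
    {"category": "Sexual", "severity": 2},
    {"category": "Violence", "severity": 6},
]
print(violations(sample))
```

Keeping the thresholds in one dictionary lets a stricter product (for example, an education app) lower them without touching the rest of the pipeline.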

Pros:

- Automated filtering

- Enterprise-grade protection against offensive content across text, image, and video-like workflows

Cons:

- Footprint is still relatively small

- Enterprise complexity and cost sensitivity

Best For: Large apps, marketplaces, education products, enterprise copilots, or internal AI assistants that need moderation plus governance in the same environment. It is an especially logical choice if your stack already lives in Azure / Microsoft Foundry / Azure OpenAI.

Pricing: Pay-as-you-go, billed by the number of text records and number of images analyzed.

Google Cloud Vision SafeSearch

Google SafeSearch is the most lightweight image moderation API in 2026. It detects five likelihood-based categories: adult, spoof, medical, violence, racy.
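Because the results are likelihood levels rather than numeric scores, a common pattern is to compare each level against a minimum. The sketch below assumes the annotation has already been converted to a plain dictionary of category name to likelihood name; the function name and the default cut-off are hypothetical.

```python
# Likelihood names in ascending order of certainty.
LIKELIHOOD_ORDER = [
    "UNKNOWN",
    "VERY_UNLIKELY",
    "UNLIKELY",
    "POSSIBLE",
    "LIKELY",
    "VERY_LIKELY",
]


def flagged_categories(annotation: dict[str, str], minimum: str = "LIKELY") -> list[str]:
    """Return the categories whose likelihood is at or above `minimum`."""
    floor = LIKELIHOOD_ORDER.index(minimum)
    return [
        category
        for category, likelihood in annotation.items()
        if LIKELIHOOD_ORDER.index(likelihood) >= floor
    ]


# Illustrative input, not real API output.
sample_annotation = {
    "adult": "VERY_UNLIKELY",
    "spoof": "POSSIBLE",
    "medical": "UNLIKELY",
    "violence": "LIKELY",
    "racy": "VERY_LIKELY",
}
print(flagged_categories(sample_annotation))
```

Lowering `minimum` to `"POSSIBLE"` makes the filter stricter at the cost of more false positives.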

Pros:

- Ease of use, good accuracy

- Smooth integration with other Google services

Cons: Limited customization

Best For: Teams that need a fast, reliable baseline for image uploads in a consumer app, marketplace, media site, forum, or internal tool, especially if they already use GCP and do not need complex policy logic.

Pricing: Free for the first 1,000 units/month, then $1.50 per 1,000 units from 1,001 to 5,000,000, and $0.60 per 1,000 units above that.
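For budgeting, the tiers above can be turned into a rough monthly estimate. This is a sketch that assumes pro-rata billing per unit and uses only the prices quoted in this article; actual invoices may round differently, so treat the result as an approximation.

```python
FREE_UNITS = 1_000            # free units per month
TIER1_LIMIT = 5_000_000       # upper bound of the first paid tier
TIER1_PRICE_PER_1000 = 1.50   # USD, units 1,001 to 5,000,000
TIER2_PRICE_PER_1000 = 0.60   # USD, units above 5,000,000


def monthly_cost(units: int) -> float:
    """Estimate the monthly cost in USD for a given number of units."""
    tier1_units = max(0, min(units, TIER1_LIMIT) - FREE_UNITS)
    tier2_units = max(0, units - TIER1_LIMIT)
    cost = (
        tier1_units / 1000 * TIER1_PRICE_PER_1000
        + tier2_units / 1000 * TIER2_PRICE_PER_1000
    )
    return round(cost, 2)
```

For example, `monthly_cost(101_000)` charges for 100,000 units in the first paid tier.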

AWS Rekognition

AWS Rekognition is the strongest API for high-scale production moderation, especially when you need both images and video. It supports synchronous image moderation and asynchronous video moderation, and AWS also offers Custom Moderation plus Amazon Augmented AI for human review.

Pros:

- Supports both image and video moderation

- Ease of integration, high accuracy

Cons: JSON output can be hard to interpret
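One way to make the output easier to read is to group the returned labels under their top-level category. The sketch below works on an already-parsed response dictionary and makes no network call; it assumes a `ModerationLabels` list whose entries carry `Name`, `ParentName`, and `Confidence`, and the label names in the sample are illustrative only.

```python
def summarize_labels(response: dict, min_confidence: float = 50.0) -> dict:
    """Group moderation labels by parent category, dropping low-confidence ones."""
    grouped: dict[str, list[tuple[str, float]]] = {}
    for label in response.get("ModerationLabels", []):
        if label["Confidence"] < min_confidence:
            continue
        # Top-level labels have an empty parent, so group them under their own name.
        parent = label.get("ParentName") or label["Name"]
        grouped.setdefault(parent, []).append(
            (label["Name"], round(label["Confidence"], 1))
        )
    return grouped


# Illustrative input, not real API output.
sample_response = {
    "ModerationLabels": [
        {"Name": "Violence", "ParentName": "", "Confidence": 97.3},
        {"Name": "Weapon Violence", "ParentName": "Violence", "Confidence": 97.3},
        {"Name": "Alcohol", "ParentName": "", "Confidence": 32.0},
    ]
}
print(summarize_labels(sample_response))
```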

Best For: Teams that run a UGC platform, video platform, marketplace, social product, or moderation pipeline at AWS scale, especially if they expect to need custom adapters, video moderation, or human review workflows later.

Pricing: New customers get a free tier that includes 1,000 images/month for Rekognition Image and 60 minutes/month of video analysis during the free tier period.

Hive Moderation

Hive is a specialist moderation platform that supports visual, text, audio, GIF, video, and live-stream moderation.

Pros:

- Specialized moderation coverage

- Broad multi-format moderation: +25 model classes

Cons: Pricing is custom: more sales-led procurement and less self-serve developer testing

Best For: Social platforms, dating apps, creator platforms, livestreaming products, or trust-and-safety-heavy marketplaces that need multi-format coverage from day one.

Pricing: Custom pricing.

OpenAI Moderation API

OpenAI Moderation API is a very lightweight moderation layer for text and images with minimal extra integration work. The official moderation guide states the endpoint supports text and images and recommends the omni-moderation-latest model for new apps.
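As a sketch of what a mixed text and image request body might look like, the helper below builds the JSON payload without sending it. The field layout follows our understanding of the multimodal input format and should be checked against the official API reference; the function name and the example URL are hypothetical.

```python
from typing import Optional


def build_moderation_request(
    text: Optional[str] = None, image_url: Optional[str] = None
) -> dict:
    """Build a request body with text and/or image input (nothing is sent)."""
    inputs = []
    if text:
        inputs.append({"type": "text", "text": text})
    if image_url:
        inputs.append({"type": "image_url", "image_url": {"url": image_url}})
    if not inputs:
        raise ValueError("provide text, image_url, or both")
    return {"model": "omni-moderation-latest", "input": inputs}


# Hypothetical example values.
payload = build_moderation_request(
    text="caption to check",
    image_url="https://example.com/upload.png",
)
print(payload)
```

Building the payload separately from the HTTP call makes it easy to unit test and to log what was submitted for moderation.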

Pros:

- Fast integration

- Scalability

Cons:

- Documentation gaps

- Teams sometimes need to build their own guardrail logic around the API

Best For: Companies that already use OpenAI and want a cheap or free first safety layer for image uploads, profile photos, generated images, or mixed text+image flows, without buying a separate trust-and-safety stack.

Pricing: Free to use.

Alice

Alice is an AI security, safety, and trust platform with strong emphasis on adversarial intelligence, guardrails, continuous testing, and production monitoring for GenAI and platform safety.

Pros:

- Safe content control

- Ease of releasing content safely

- Operational usefulness

Cons: Too heavy for teams that just need simple NSFW filtering

Best For: Large platforms, regulated enterprises, or teams operating AI assistants / agents where safety, abuse prevention, compliance, and adversarial testing matter as much as moderation itself.

Pricing: Custom pricing.

Sightengine

Sightengine is one of the best developer-first specialist moderation APIs in 2026. It supports a large number of moderation classes, including nudity, hate, violence, drugs, weapons, self-harm, commercial text, children, OCR/QR moderation, and more.

Pros:

- 110+ to 120+ moderation classes

- Covers images, video, and live-stream-related workflows

- Quick to set up, fairly intuitive after initial onboarding

Cons:

- Coverage is not very deep

- Product can require more manual policy decisions on the customer side

Best For: Dating apps, communities, social tools, creator platforms, ad platforms, and marketplaces that want a dedicated moderation API with more category depth, without moving all the way to a large enterprise platform.

Pricing: Starter $29/month for 10,000 operations plus $0.002 per extra op; Pro $99/month for 40,000 operations plus $0.002 per extra op.

Imagga

Imagga combines image/video recognition, tagging, search, OCR, and content moderation, and also offers an AI+human moderation platform.

Pros:

- Simple and effective to implement

- Strong support from the technical team

Best For: Companies that need moderation + tagging + OCR + search in the same product stack, especially in DAM, media, commerce, or photo-heavy applications.

Pricing: Free $0/month with 100 API requests, Indie $79/month with 70,000 API requests, Pro $349/month with 300,000 API requests.

Clarifai

Clarifai is the most flexible image moderation API on this list. It supports custom CV pipelines, model orchestration, and hybrid deployments.

Pros: Fast and accurate image/video recognition

Cons:

- Pricing can feel expensive for small teams

- Documentation can be unclear or incomplete

Best For: Large product teams and AI-heavy companies that want custom classifiers, training, workflow composition, private deployment, or more advanced multimodal AI.

Pricing: Pay As You Go or Request-level pricing from $0.0012/request.

PicPurify

PicPurify is the most focused API on image moderation in 2026. It is built around direct checks for categories like porn, nudity, violence, drugs, weapons, hate symbols, obscene gestures.

Pros:

- Speed, cost efficiency, scalability

- Strong operational impact for reducing manual moderation

Best For: Companies that want a small, affordable, category-based moderation API for UGC uploads, especially if they care most about explicit content, gore, drugs, or hate-symbol filtering.

Pricing: Free with 2,000 units, Starter $19/month for 10,000 units, Pro $49/month for 30,000 units, Ultra $99/month for 70,000 units, Mega $249/month for 200,000 units, Supra $499/month for 450,000 units.
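With this many tiers, picking a plan comes down to finding the smallest one that covers your expected volume. The sketch below uses only the figures quoted above and assumes that every allowance, including the free one, is per month; the function name is hypothetical.

```python
# (plan name, price in USD per month, included units), smallest first.
PLANS = [
    ("Free", 0, 2_000),
    ("Starter", 19, 10_000),
    ("Pro", 49, 30_000),
    ("Ultra", 99, 70_000),
    ("Mega", 249, 200_000),
    ("Supra", 499, 450_000),
]


def smallest_plan(units_per_month: int):
    """Return (name, price) of the smallest plan covering the volume, or None."""
    for name, price, included in PLANS:
        if units_per_month <= included:
            return name, price
    return None  # volume exceeds every listed plan


print(smallest_plan(25_000))
```

A `None` result means the volume exceeds every listed tier, which is the point to contact the vendor about a custom quote.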

FAQs - Best Image Moderation APIs

What is an image moderation API?

An image moderation API is a tool that uses AI to automatically analyze images and detect unsafe, inappropriate, or policy-violating content such as nudity, violence, hate symbols, drugs, or graphic material.

It returns structured moderation results, often with categories and confidence scores, so developers can decide whether to block, flag, review, blur, or approve an image.

What is the best free image moderation API?

The best free image moderation API for most teams is OpenAI Moderation API. If you want a free option with a more specialized moderation product and paid upgrade path, Sightengine also offers a free plan.

Which image moderation API supports both image and video moderation?

Amazon Rekognition supports both image and video moderation, including asynchronous video moderation workflows for production-scale pipelines. Sightengine also supports moderation across images, videos, and text, which makes it attractive for companies handling multiple content formats.

Which image moderation API is best for startups?

Sightengine is often one of the best image moderation APIs for startups because it is a specialist moderation API with a free plan, transparent pricing starting at $29/month, and support for image, video, and text moderation.

Which image moderation API is best for enterprise apps?

Azure AI Content Safety is one of the best image moderation APIs for enterprise apps because it is built for moderating text and images, supports configurable sensitivity levels, and is positioned by Microsoft as a safety layer for both user-generated and AI-generated content.
