Groq

Ultra-fast LLM inference for real-time AI applications

What is Groq?

Groq is built for developers who need very low-latency AI inference at scale. Its custom LPU (Language Processing Unit) hardware delivers significantly lower latency than traditional GPU-based solutions, which makes it well suited to real-time AI applications.

Groq supports modern open-source LLMs and excels at conversational AI, text generation, and responses streamed as they are generated. It is particularly well suited to products where speed and responsiveness are critical.

Value delivered

Save time

Integrate once and access hundreds of models through a single API, instead of maintaining a separate integration for each provider.

Model updates, provider changes, and new releases are handled transparently.

Reduce costs

Use the most cost-efficient model for each task. Avoid vendor lock-in and adapt quickly when pricing or performance changes.
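To make the idea of picking the most efficient model per task concrete, here is a minimal Python sketch. It is illustrative only: the `ModelOption` type, the `pick_model` helper, the model names, and every price and quality value are hypothetical placeholders, not real catalog data or a real API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # placeholder price, not real pricing
    quality: int               # placeholder quality tier (higher is better)


def pick_model(options: List[ModelOption], min_quality: int) -> ModelOption:
    """Return the cheapest option whose quality tier meets the requirement."""
    eligible = [o for o in options if o.quality >= min_quality]
    if not eligible:
        raise ValueError(f"no model reaches quality tier {min_quality}")
    return min(eligible, key=lambda o: o.cost_per_1k_tokens)


# Placeholder catalog; all values are invented for illustration.
CATALOG = [
    ModelOption("model-small", 0.10, 1),
    ModelOption("model-medium", 0.50, 2),
    ModelOption("model-large", 2.00, 3),
]

choice = pick_model(CATALOG, min_quality=2)
print(choice.name)  # model-medium
```

Because the selection depends only on the catalog data, a pricing change means updating a catalog entry rather than rewriting application code.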

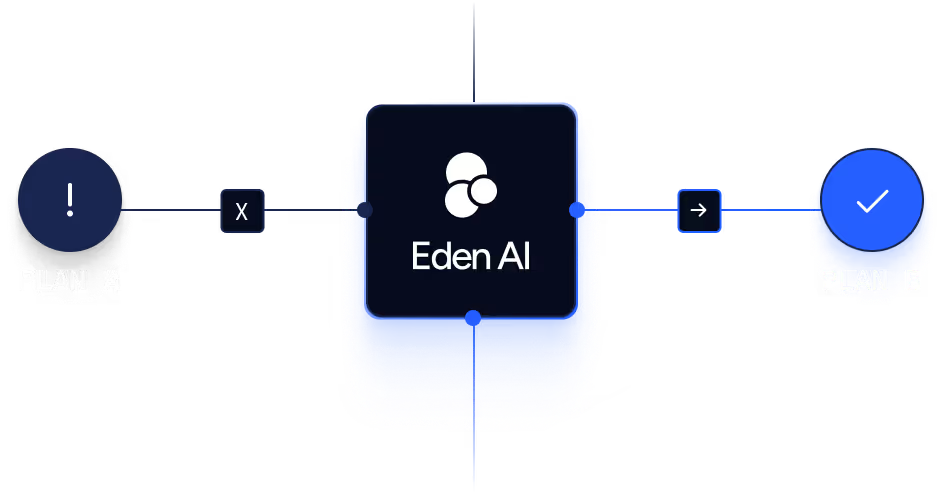

Reduce risk

Built-in fallback mechanisms protect applications from model outages. Routing flexibility allows rapid adaptation to evolving technologies and providers.
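As a rough illustration of what a fallback mechanism does, the Python sketch below tries each provider in order and returns the first successful response. It is a generic pattern under stated assumptions, not the platform's actual implementation: `complete_with_fallback`, `AllProvidersFailed`, and the two stand-in providers are hypothetical names invented for this example.

```python
from typing import Callable, List, Sequence, Tuple

# A provider is a (name, callable) pair; the callable maps a prompt to text.
Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the fallback chain has failed."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first that succeeds."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure moves on to the next provider
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in providers for the example (hypothetical, no network calls).
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def stable_backup(prompt: str) -> str:
    return f"echo: {prompt}"


used, text = complete_with_fallback(
    "Hello", [("primary", flaky_primary), ("backup", stable_backup)]
)
print(used, text)  # backup echo: Hello
```

In a real deployment the ordering would typically reflect preference, for example the fastest provider first and a more widely available one as backup.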

Start building with Eden AI

A single interface to integrate the best AI technologies into your products.