
In this benchmark, created by our CTO and AI engineer Samy Melaine, you will learn how OpenAI's GPT-3 language model currently compares to other language models on the market. Eden AI provides an easy, developer-friendly API that gives you access to many AI technologies.

What is OpenAI's GPT-3?

OpenAI’s main objective is to create Artificial General Intelligence: “highly autonomous systems that outperform humans at most economically valuable work”. As part of this effort, they have been working on combining text, image, and speech models, and have achieved a significant milestone with the release of GPT-3. The question addressed in this article is whether GPT-3 can achieve state-of-the-art performance on any language task when compared to specialized models.

OpenAI is challenging companies that offer specialized models (especially NLP models) with its GPT-3 model. Let’s ask ChatGPT (GPT-3 optimized for dialogue) what GPT-3 is.

Other specialized AI Models

Many AI companies train specialized models for specific tasks and provide access to them through APIs. These include large tech companies such as Google, Amazon, Microsoft, and IBM, as well as smaller companies that focus on specific tasks, such as DeepL for translation, Deepgram for speech, and Clarifai for vision.

Large language models like GPT-3 should be able to perform well on a wide range of natural language processing tasks without any fine-tuning, a capability known as zero-shot learning. Let's verify that!

Benchmark: GPT-3 vs. other models

To test the ability of GPT-3 to perform zero-shot learning, we compare it to state-of-the-art proprietary models from various companies on four tasks: keyword extraction, sentiment analysis, language detection, and translation. We did this using a single API: Eden AI. Code snippets will be provided for each task so that you can reproduce the predictions yourself on your own data.
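As a rough illustration of what zero-shot prompting looks like for these four tasks, here is a minimal sketch. The prompt wording, the `PROMPT_TEMPLATES` dictionary, and the `build_zero_shot_prompt` helper are all hypothetical; they are not the prompts used in this benchmark. The point is only that each task is described in plain language, with no training examples and no fine-tuning.

```python
# Hypothetical zero-shot prompt templates, one per benchmark task.
# These are illustrative assumptions, not the prompts used in the benchmark.
PROMPT_TEMPLATES = {
    "language_detection": (
        "Identify the language of the following text. "
        "Answer with the ISO 639-1 code only.\n\nText: {text}\nLanguage:"
    ),
    "sentiment_analysis": (
        "Classify the sentiment of the following text as "
        "Positive, Negative or Neutral.\n\nText: {text}\nSentiment:"
    ),
    "keyword_extraction": (
        "Extract the keywords and keyphrases that best represent the "
        "following text, separated by commas.\n\nText: {text}\nKeywords:"
    ),
    "translation": (
        "Translate the following text from {source} to {target}."
        "\n\nText: {text}\nTranslation:"
    ),
}


def build_zero_shot_prompt(task: str, text: str, **params: str) -> str:
    """Fill the template for `task` with the input text and extra parameters."""
    if task not in PROMPT_TEMPLATES:
        raise ValueError(f"Unknown task: {task}")
    return PROMPT_TEMPLATES[task].format(text=text, **params)


if __name__ == "__main__":
    print(build_zero_shot_prompt("language_detection", "Bonjour tout le monde"))
    print(build_zero_shot_prompt("translation", "Hello", source="English", target="French"))
```

The resulting string would then be sent to the language model as-is; the model's completion is the prediction.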

There is also an open-source version of Eden AI that you can find on GitHub as a Python module!

1. Language Detection comparison

Language detection is the task of identifying the language in which a text is written.

Dataset

We used a dataset from Hugging Face covering 20 languages: Arabic (ar), Bulgarian (bg), German (de), Modern Greek (el), English (en), Spanish (es), French (fr), Hindi (hi), Italian (it), Japanese (ja), Dutch (nl), Polish (pl), Portuguese (pt), Russian (ru), Swahili (sw), Thai (th), Turkish (tr), Urdu (ur), Vietnamese (vi), and Chinese (zh).

Evaluation

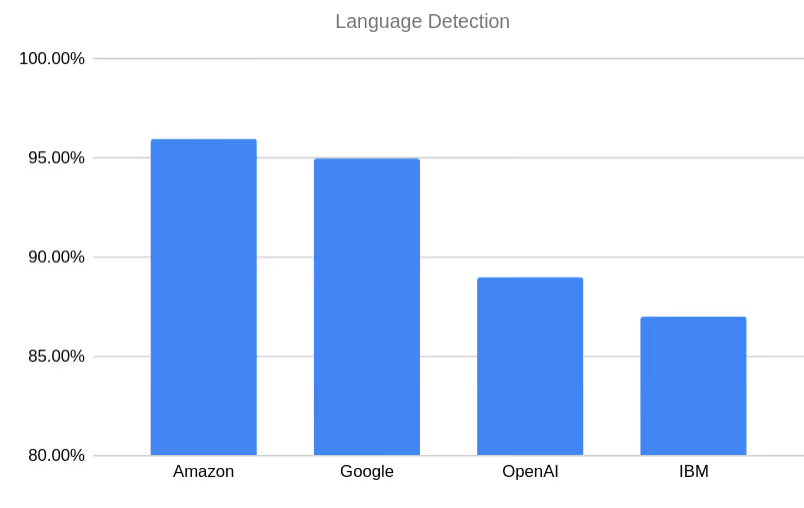

We compared the performance of OpenAI to that of Google, Amazon, and IBM on a set of several hundred examples, using accuracy (the share of examples for which the predicted language matches the reference label) as the evaluation metric.
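Accuracy is straightforward to compute yourself. The sketch below assumes predictions and reference labels are given as two parallel lists of language codes; the `accuracy` function name and the sample data are illustrative only.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Fraction of predictions that exactly match the reference label."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("cannot compute accuracy on an empty dataset")
    correct = sum(p == r for p, r in zip(predictions, references))
    return correct / len(references)


if __name__ == "__main__":
    # Made-up example: 3 of the 4 predicted language codes are correct.
    predicted = ["en", "fr", "de", "es"]
    expected = ["en", "fr", "nl", "es"]
    print(accuracy(predicted, expected))  # 0.75
```

The same function applies unchanged to any single-label classification task, as long as the provider's labels are first mapped to the dataset's label set.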

Results

The results are shown below with OpenAI ranking third out of the four AI providers we chose.

- Amazon 96%

- Google 95%

- OpenAI 89%

- IBM 87%

2. Sentiment Analysis comparison

This task is about identifying the sentiment a writer expresses in a piece of text. It can be Positive, Negative, or Neutral.

Dataset

Most of the datasets we found did not include a “neutral” sentiment, except for the Twitter Sentiment Analysis dataset from Kaggle.

Evaluation

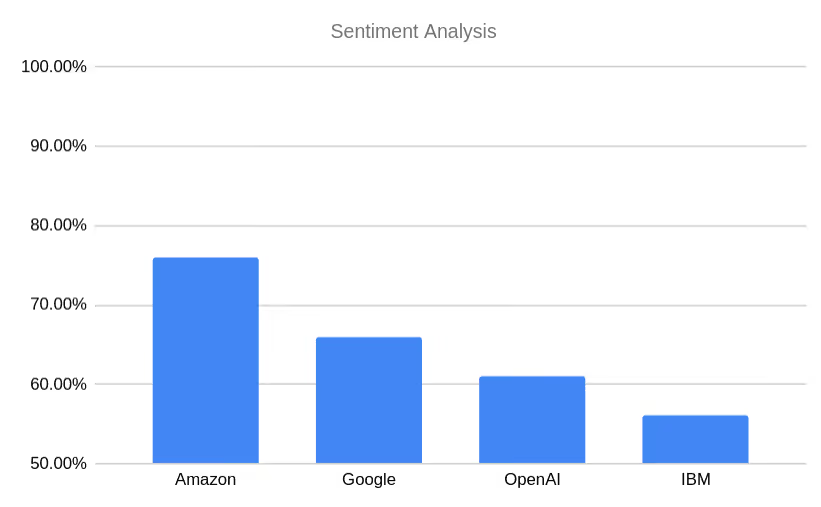

We compared OpenAI to Google’s, Amazon’s, and IBM’s APIs using the accuracy metric.


Results

Once again, OpenAI takes third place:

- Amazon 76%

- Google 66%

- OpenAI 61%

- IBM 56%

3. Keyword Extraction comparison

Keyword or Keyphrase Extraction is about being able to extract the words or phrases that most represent a given text.

Dataset

We selected our datasets from the public GitHub repository AutomaticKeyphraseExtraction. Most of the datasets listed there contain documents too long for OpenAI's 4k-token limit, so we used the Hulth2003 abstracts dataset.

Since the different providers are trained to return keywords and keyphrases present in the original text, we did some cleaning to remove all keywords that were not present in the abstracts. We ended up with 470 abstracts.
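The cleaning step can be sketched as follows. This is a minimal illustration, not the exact procedure we ran: the case-insensitive substring match, the decision to drop abstracts left with no keywords, and the function names `keep_present_keywords` and `clean_dataset` are all assumptions.

```python
from typing import List, Tuple


def keep_present_keywords(abstract: str, keywords: List[str]) -> List[str]:
    """Keep only the keywords that literally appear in the abstract.

    Assumption: matching is a case-insensitive substring test.
    """
    text = abstract.lower()
    return [kw for kw in keywords if kw.lower() in text]


def clean_dataset(examples: List[Tuple[str, List[str]]]) -> List[Tuple[str, List[str]]]:
    """Filter keywords per abstract and drop abstracts with none left (assumption)."""
    cleaned = []
    for abstract, keywords in examples:
        present = keep_present_keywords(abstract, keywords)
        if present:
            cleaned.append((abstract, present))
    return cleaned


if __name__ == "__main__":
    abstract = "We propose a neural network model for keyword extraction."
    keywords = ["Neural network", "keyword extraction", "deep learning"]
    print(keep_present_keywords(abstract, keywords))
    # ['Neural network', 'keyword extraction']
```

A stricter variant could match on word boundaries or on stemmed tokens; substring matching is simply the easiest rule to reproduce.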

Evaluation

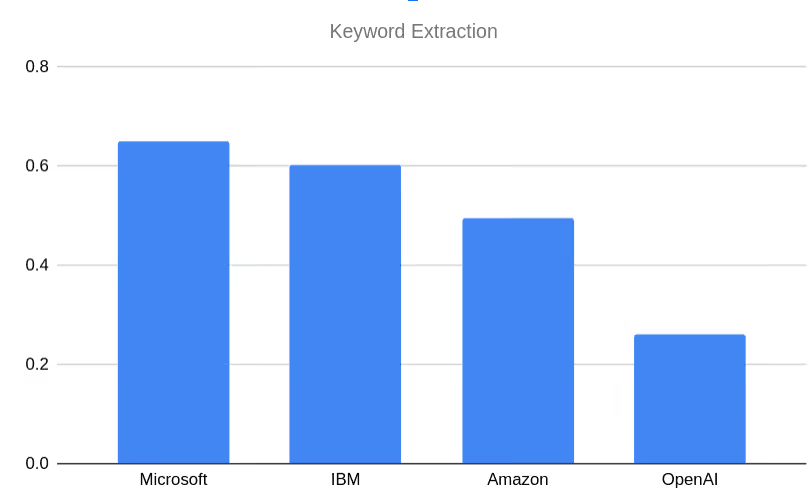

We compared OpenAI to Microsoft, Amazon, and IBM, and measured their performance using the average precision metric.
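The exact definition of the metric is not reproduced here. One plausible reading, assumed in the sketch below, is the precision of each document's predicted keywords (the share of predicted keywords that appear in the reference set), averaged over all documents. Note that "average precision" can also refer to a ranking-based metric from information retrieval, which this sketch does not implement. The function names and the lowercase normalization are illustrative assumptions.

```python
from typing import List


def precision(predicted: List[str], gold: List[str]) -> float:
    """Share of predicted keywords that are in the reference set.

    Assumption: keywords are compared after lowercasing and stripping whitespace.
    """
    predicted_set = {kw.lower().strip() for kw in predicted}
    gold_set = {kw.lower().strip() for kw in gold}
    if not predicted_set:
        return 0.0  # no predictions: count the document as zero precision
    return len(predicted_set & gold_set) / len(predicted_set)


def average_precision(all_predicted: List[List[str]], all_gold: List[List[str]]) -> float:
    """Mean of the per-document precision over the whole dataset."""
    if len(all_predicted) != len(all_gold):
        raise ValueError("need one prediction list per reference list")
    if not all_gold:
        raise ValueError("cannot average over an empty dataset")
    scores = [precision(p, g) for p, g in zip(all_predicted, all_gold)]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    predicted = [["neural network", "model"], ["translation"]]
    gold = [["neural network"], ["translation", "evaluation"]]
    # Document 1: 1 of 2 predictions correct (0.5); document 2: 1 of 1 (1.0).
    print(average_precision(predicted, gold))  # 0.75
```

Under this reading, a provider that returns many loosely related keywords is penalized, while one that returns few but correct keywords scores well.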


Results

This time, OpenAI's GPT-3 ranked last:

1. Microsoft 0.6513312046679187

2. IBM 0.6022276518997

3. Amazon 0.4954784007523

4. OpenAI 0.2598775421

4. Translation comparison

Automatic translation is the process of converting a text written in language A into language B.

Dataset

For our test dataset, we selected 500 examples of translations between various European language pairs (German-French, English-French, French-Italian, German-Spanish, German-Swedish) from the Tatoeba Translation Challenge, maintained by the University of Helsinki's Language Technology Research Group.

Evaluation

We compared OpenAI to DeepL, ModernMT, NeuralSpace, Amazon, and Google. Many metrics exist for automatic machine translation evaluation. We chose COMET by Unbabel (wmt21-comet-da), which is based on a machine learning model trained to reach state-of-the-art levels of correlation with human judgements (read more in their paper).


Results

The absolute scores are not directly interpretable; they are only used to rank machine translation models against each other. Here again, OpenAI ranks last.

- DeepL: 0.19001633345126925

- ModernMT: 0.17788391513374424

- Amazon: 0.16483921567053203

- NeuralSpace: 0.163133354485786

- Google: 0.16280640903935437

- OpenAI: 0.15934198508564865

When should you consider using OpenAI GPT-3?

OpenAI's GPT-3 has demonstrated impressive results on natural language processing tasks, handling several of them in a zero-shot setting without any fine-tuning.

However, for specific tasks, GPT-3 may not currently be the best choice as an API due to its lower performance compared to other models and the 4k input token limit which may make it difficult to process longer texts. It is important to carefully evaluate different models and choose the one that is most suitable for a given task or application.

We still need to closely watch the new models OpenAI is working on. As Sam Altman mentioned in an interview, they are implementing a continuous learning approach that would let their model constantly improve by feeding on the internet. They are also planning to unify their models to deal with multiple input types, resulting in a single model capable of analyzing any type of data.

Why choose Eden AI's unique API for your project?

When selecting a pre-trained artificial intelligence model and its API for a specific task or application, it is crucial to carefully evaluate the available options and choose the one that is most appropriate. This involves considering the performance and accuracy of the models and APIs on the relevant tasks, the size and complexity of the dataset, and any constraints on resources such as time, computational power, and budget.

Using Eden AI's unique API is quick and easy, and can help ensure the success of your project!

Save time and cost

We offer a unified API for all providers: simple and standard to use, with a quick switch that allows you to have access to all the specific features very easily (diarization, timestamps, noise filter, etc.).

Easy to integrate

The JSON output format is the same for all suppliers thanks to Eden AI's standardization work. The response elements are also standardized thanks to Eden AI's powerful matching algorithms. This means for example that diarization would be in the same format for every speech-to-text API call.

Customization

With Eden AI you have the possibility to integrate a third party platform: we can quickly develop connectors. To go further and customize your request with specific parameters, check out our documentation.
