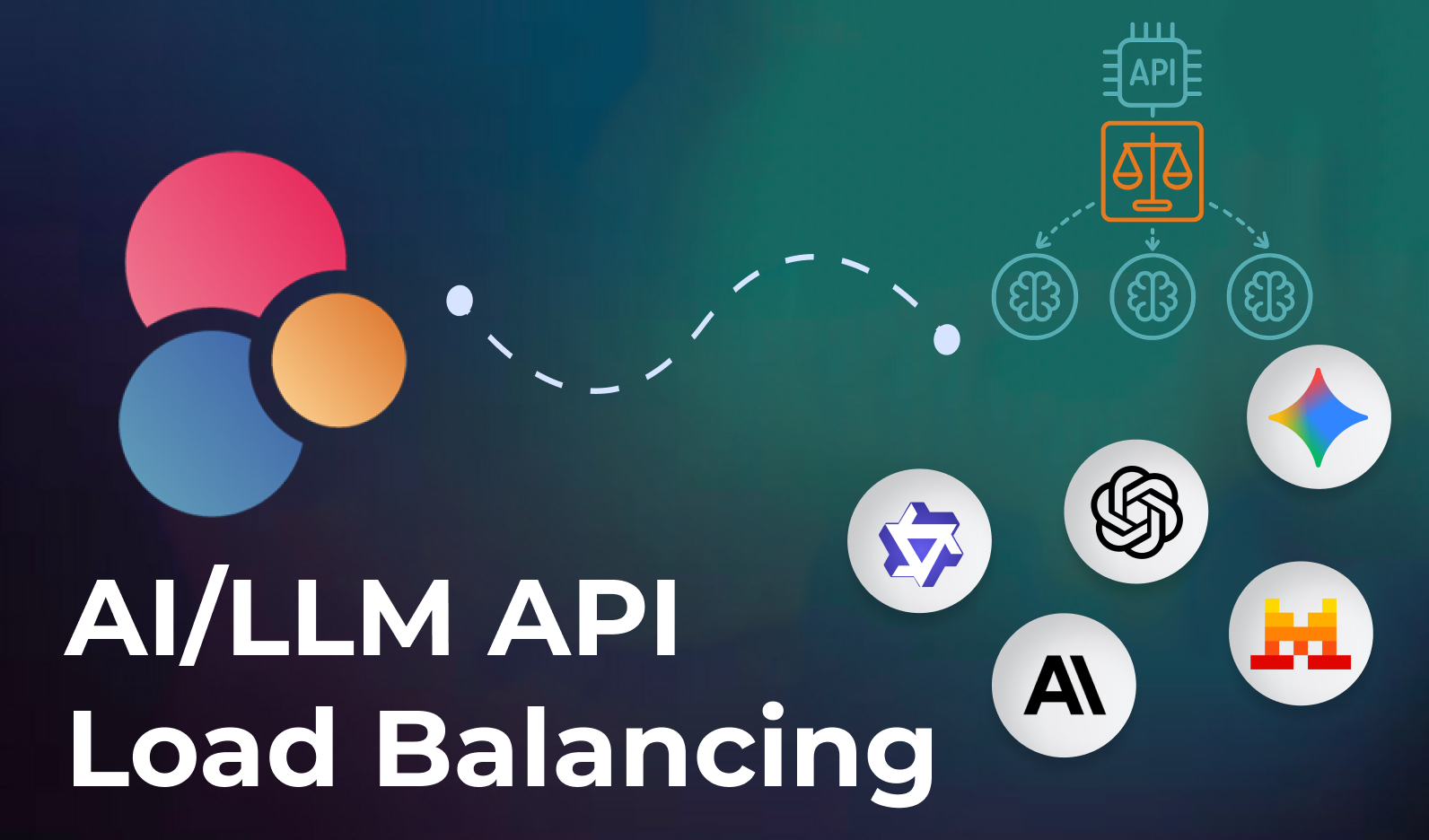

How Can You Load Balance Calls to AI and LLM APIs?

As applications rely more on AI APIs - from LLMs to computer vision and speech recognition - stability and performance become key challenges.

When one provider gets overloaded, or when API rate limits are hit, your service can slow down or fail entirely.

Load balancing ensures that requests are automatically distributed across different providers or models, so your system remains responsive even under heavy load.

Why Load Balancing Is Important for AI APIs

AI and LLM APIs differ from standard web APIs in several ways:

- Variable response times: Each model may respond at a different speed.

- Dynamic availability: Providers sometimes experience temporary slowdowns or outages.

- Rate limits: APIs often cap the number of requests per minute.

- Different pricing: Cost per token or call can vary significantly between providers.

Without load balancing, you risk bottlenecks, timeouts, and inconsistent performance.

How Load Balancing Works for AI APIs

The goal of load balancing is to distribute requests smartly among multiple providers or models.

Here are common strategies:

1. Round Robin

Requests are distributed evenly among available providers.

Example: Call OpenAI → Anthropic → Mistral → repeat.
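As a minimal sketch of the rotation described above, the snippet below cycles through a fixed list of providers. The provider names are plain labels taken from the example, and `RoundRobinBalancer` is a hypothetical helper, not part of any provider SDK; a real implementation would call each provider's own client where this one only returns a name.

```python
from itertools import cycle

# Labels only: real calls would go through each provider's SDK or HTTP API.
PROVIDERS = ["openai", "anthropic", "mistral"]


class RoundRobinBalancer:
    """Hands out providers in a fixed, repeating order (hypothetical helper)."""

    def __init__(self, providers):
        if not providers:
            raise ValueError("at least one provider is required")
        self._rotation = cycle(list(providers))

    def next_provider(self):
        # Each call advances the rotation by one position.
        return next(self._rotation)


balancer = RoundRobinBalancer(PROVIDERS)
order = [balancer.next_provider() for _ in range(4)]
print(order)  # the fourth pick wraps back to the first provider
```

Round robin ignores cost and speed entirely, which keeps it simple but means a slow provider receives the same share of traffic as a fast one.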

2. Weighted Distribution

Providers are assigned weights based on performance or cost.

Example: 70% of traffic goes to the cheapest provider, 30% to the fastest.
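One simple way to approximate a 70/30 split is weighted random selection. The sketch below assumes two placeholder provider labels and uses Python's standard `random.choices`; the split is statistical, so the observed ratio only approaches 70/30 over many requests.

```python
import random

# Placeholder labels and weights matching the 70/30 example above.
WEIGHTS = {"cheapest_provider": 0.7, "fastest_provider": 0.3}


def pick_weighted(weights, rng=random):
    """Pick one provider at random, in proportion to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


rng = random.Random(0)  # seeded only so the demo is repeatable
counts = {name: 0 for name in WEIGHTS}
for _ in range(10_000):
    counts[pick_weighted(WEIGHTS, rng)] += 1
print(counts)  # roughly 70% / 30% of the 10,000 picks
```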

3. Latency-Based Routing

Requests are routed to the provider currently responding the fastest.
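A possible way to decide which provider is "currently responding the fastest" is to keep an exponential moving average (EMA) of observed latencies and route to the lowest one. This is a sketch under assumptions: the `LatencyRouter` class, the `alpha` smoothing factor, and the latency figures in the demo are all illustrative and do not come from any real measurement.

```python
class LatencyRouter:
    """Tracks an exponential moving average of latency per provider."""

    def __init__(self, providers, alpha=0.3):
        self.alpha = alpha  # weight of the newest sample (assumed value)
        self.avg_latency = {name: None for name in providers}

    def record(self, provider, seconds):
        """Fold one observed response time into the provider's average."""
        previous = self.avg_latency[provider]
        if previous is None:
            self.avg_latency[provider] = seconds
        else:
            self.avg_latency[provider] = (
                self.alpha * seconds + (1 - self.alpha) * previous
            )

    def fastest(self):
        # Providers with no samples yet sort first, so each gets tried once.
        return min(
            self.avg_latency,
            key=lambda n: (self.avg_latency[n] is not None, self.avg_latency[n] or 0.0),
        )


router = LatencyRouter(["openai", "anthropic", "mistral"])
router.record("openai", 0.9)      # made-up latencies, in seconds
router.record("anthropic", 0.4)
router.record("mistral", 1.2)
first_choice = router.fastest()   # lowest average so far

router.record("anthropic", 3.0)   # one slow response raises its average
second_choice = router.fastest()
print(first_choice, second_choice)
```

The moving average smooths out single outliers while still reacting when a provider slows down for several requests in a row.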

4. Health Checks & Failover

If one provider fails or becomes slow, requests are automatically rerouted to a backup.
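The failover behaviour can be sketched as a loop that tries providers in priority order and temporarily skips any that failed recently. Everything here is hypothetical: `call_with_failover`, the `cooldown` value, and the two stand-in provider functions are illustrations, and a production client would catch narrower exception types than `Exception`.

```python
import time


def call_with_failover(providers, prompt, unhealthy, cooldown=30.0):
    """Try providers in priority order, skipping ones that failed recently.

    providers: list of (name, callable) pairs, highest priority first.
    unhealthy: dict mapping provider name to the time it may be retried.
    """
    now = time.monotonic()
    errors = {}
    for name, call in providers:
        if unhealthy.get(name, float("-inf")) > now:
            continue  # still cooling down after a recent failure
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose for this sketch
            unhealthy[name] = now + cooldown
            errors[name] = exc
    raise RuntimeError(f"all providers failed or are cooling down: {errors}")


# Stand-in provider functions for the demo.
def primary(prompt):
    raise TimeoutError("primary timed out")


def backup(prompt):
    return f"backup answer to: {prompt}"


unhealthy = {}
providers = [("primary", primary), ("backup", backup)]
used, answer = call_with_failover(providers, "Hello", unhealthy)
print(used, answer)
```

After the first failure, `primary` stays in `unhealthy` for the cooldown period, so later calls go straight to the backup without waiting for another timeout.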

5. Dynamic Routing (Smart Load Balancing)

Use real-time metrics (speed, cost, success rate) to choose the best provider for each request.
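One way to combine speed, cost, and success rate is a weighted score per provider, with the lowest score winning each request. The sketch below is only an illustration: the `ProviderStats` fields, the default weights, and all numbers in the demo are invented placeholders, and in practice the metrics would be refreshed continuously from live traffic.

```python
from dataclasses import dataclass


@dataclass
class ProviderStats:
    name: str
    avg_latency_s: float       # recent average response time, in seconds
    cost_per_1k_tokens: float  # price in an arbitrary currency unit
    success_rate: float        # fraction of successful calls, 0.0 to 1.0


def score(stats, latency_weight=1.0, cost_weight=1.0, failure_weight=5.0):
    """Lower is better. The weights are arbitrary illustrative values."""
    return (
        latency_weight * stats.avg_latency_s
        + cost_weight * stats.cost_per_1k_tokens
        + failure_weight * (1.0 - stats.success_rate)
    )


def choose_provider(all_stats, **weights):
    """Return the provider with the lowest combined score."""
    return min(all_stats, key=lambda s: score(s, **weights))


# Placeholder metrics, not real measurements or prices.
stats = [
    ProviderStats("provider_a", avg_latency_s=0.8, cost_per_1k_tokens=0.50, success_rate=0.99),
    ProviderStats("provider_b", avg_latency_s=0.4, cost_per_1k_tokens=1.20, success_rate=0.97),
    ProviderStats("provider_c", avg_latency_s=1.5, cost_per_1k_tokens=0.10, success_rate=0.90),
]

balanced = choose_provider(stats)
speed_first = choose_provider(stats, cost_weight=0.0)
cost_only = choose_provider(stats, latency_weight=0.0, failure_weight=0.0)
print(balanced.name, speed_first.name, cost_only.name)
```

Changing the weights changes the routing policy without touching the rest of the code, which is the main appeal of a score-based approach.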

Example Use Cases

- Chatbots and Assistants: Distribute LLM queries between models to ensure faster response times.

- Document Processing: Use several OCR APIs to handle large batches without overloading a single one.

- Speech Recognition: Split audio transcription workloads across providers depending on language or accuracy.

- Generative AI Apps: Balance text generation or image creation tasks to avoid queue delays and optimize costs.

How Eden AI Simplifies Load Balancing

Normally, implementing load balancing for AI APIs means:

- Coding multiple integrations,

- Building monitoring tools,

- Managing routing logic,

- Handling fallback in case of failure.

With Eden AI:

- You access dozens of AI and LLM providers through a single unified API.

- Requests can be automatically balanced based on cost, latency, or model performance.

- Built-in fallback and rerouting logic prevent downtime.

- A dashboard lets you monitor API usage and performance in real time.

In short: you get smart load balancing out of the box, without managing multiple APIs manually.

Conclusion

As your application scales, relying on a single AI provider becomes risky and costly. Load balancing ensures your system remains fast, stable, and resilient, even under heavy load.

By using a unified platform like Eden AI, you can easily distribute requests across providers, monitor performance, and guarantee reliability, while keeping integration simple and efficient.

Frequently Asked Questions (FAQ)

What is the main topic covered in this article?

This article covers how to load balance calls to AI and LLM APIs: why it matters, the common routing strategies, example use cases, and how Eden AI simplifies the setup.

How can Eden AI help with this?

Eden AI provides a unified API that gives you access to multiple AI and LLM providers, with standardized responses and centralized billing. Requests can be balanced across those providers based on cost, latency, or model performance.

Do I need technical expertise to get started?

Not necessarily. Eden AI offers a no-code playground for testing and clear API documentation for developers. Many integrations can be set up in minutes.

What are the most common use cases?

Common use cases include chatbots and assistants, document processing with several OCR APIs, speech recognition, and generative AI apps for text or image creation.

Is Eden AI GDPR compliant?

Yes. Eden AI allows you to filter providers to only use GDPR-compliant engines, and the platform itself does not store or reuse your data.
