Summarize this article with:

- Many products built around AI start with one provider, it’s fast, simple, and easy to manage.

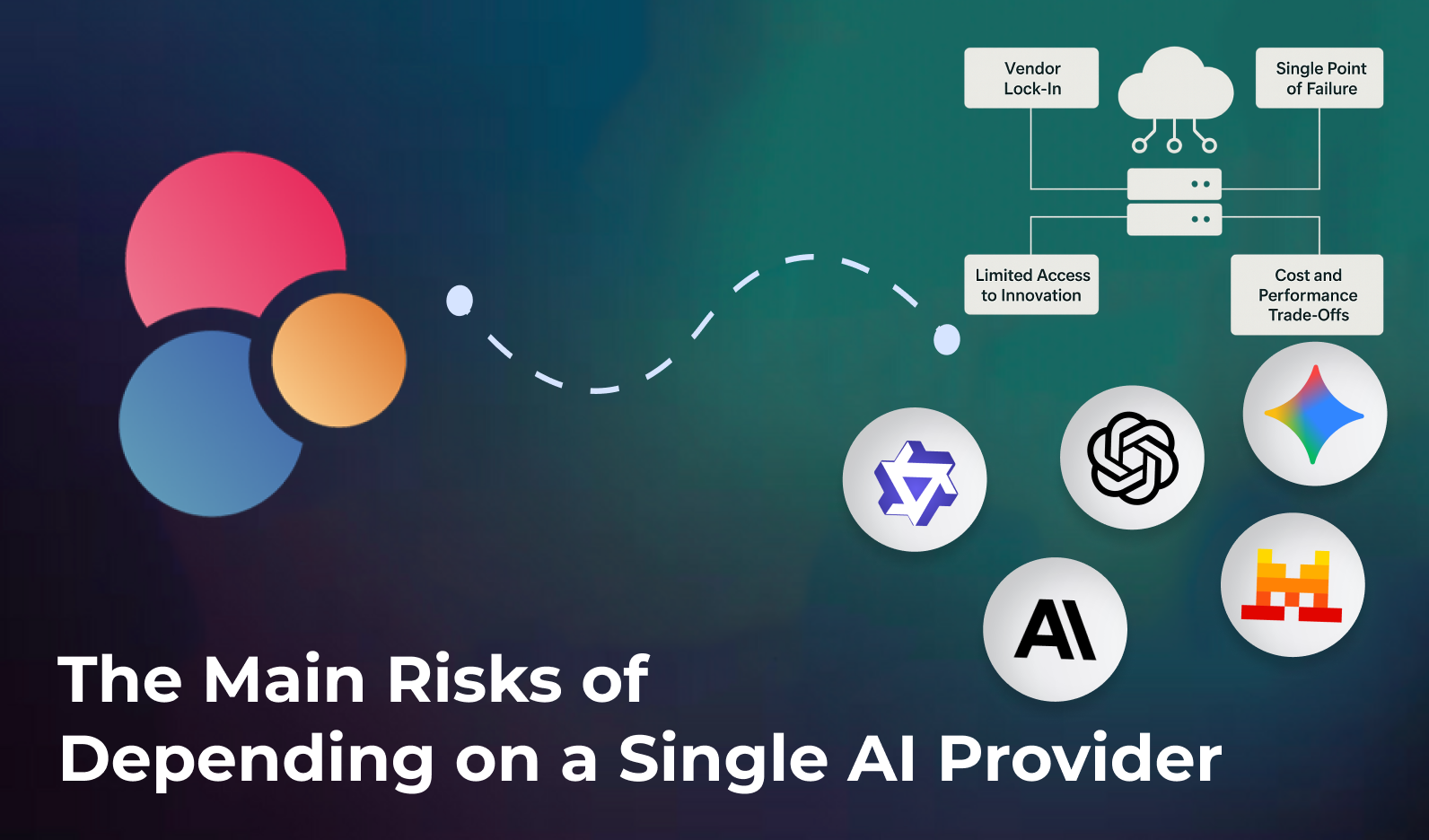

- Vendor lock-in occurs when your architecture depends too heavily on one provider’s SDKs or API structure.

- The AI market moves fast, every month, new models outperform existing ones or offer better pricing.

- Not all models are equal in speed, accuracy, or cost.

- Depending on a single AI provider might simplify your early product phase, but it becomes a liability as you scale, exposing you to downtime, price changes, and missed innovation.

Why Should Your Product Not Rely on a Single AI Provider?

Many products built around AI start with one provider, it’s fast, simple, and easy to manage. But as your system grows, you become tied to that provider’s limits: their pricing, API, and roadmap. According to provider dependency, this overreliance leads to reduced flexibility, higher costs, and potential downtime.

1. Vendor-lock-in risk

Vendor lock-in occurs when your architecture depends too heavily on one provider’s SDKs or API structure. Any API update, price change, or feature removal forces you into expensive rework.

As highlighted in vendor lock-in risks, this dependence reduces agility and makes it hard to adopt better models later on.

By distributing requests across multiple providers from the start, you preserve your ability to evolve, both technically and financially.

2. Single point of failure

If one provider experiences an outage, rate limit, or policy change, your entire product suffers. To build resilience, teams should integrate fallback logic and load-balancing systems that distribute traffic between providers.

The load balancing guide describes how to maintain uptime and consistency while reducing latency through intelligent routing.

3. Limited access to innovation

The AI market moves fast, every month, new models outperform existing ones or offer better pricing. Depending on a single vendor prevents you from adopting these improvements.

As multi-model access shows, a unified API makes it easy to integrate new providers quickly and compare model performance for specific use cases.

4. Cost and performance trade-offs

Not all models are equal in speed, accuracy, or cost. Some providers are cheaper but slower, while others deliver better quality at a higher price.

By applying systematic benchmarking as described in model comparison, you can select the right provider for each task, optimising both cost and performance.

A well-designed multi-provider setup ensures that you always use the best model for the job.

5. How to build a multi-provider architecture

Multi-API management outlines how to structure your infrastructure for flexibility and scalability:

- Abstract the provider layer – create a unified interface to standardise calls and responses.

- Implement routing logic – route requests based on cost, latency, or accuracy thresholds.

- Introduce fallback logic – automatically reroute to another provider in case of failure.

- Monitor usage and spending – track API activity, latency, and cost in real time.

- Benchmark periodically – compare providers and switch when a better model emerges.

How Eden AI helps you build this strategy

Eden AI was designed to eliminate the pain of vendor dependency. It offers a unified API that lets you access, compare, and manage models from multiple providers effortlessly.

Key features include:

- AI Model Comparison – benchmark model quality, latency, and cost across providers.

- Cost Monitoring – visualise and control your API expenses per provider or model.

- API Monitoring – track performance, response times, and errors across all integrations.

- Caching – improve speed and reduce redundant calls by storing frequent responses.

- Multi-API Key Management – manage multiple API keys securely and route traffic intelligently.

These advanced features empower developers to manage a robust, cost-efficient, and resilient AI architecture without reinventing the wheel.

Conclusion

Depending on a single AI provider might simplify your early product phase, but it becomes a liability as you scale, exposing you to downtime, price changes, and missed innovation.

A multi-provider strategy offers flexibility, reliability, and long-term cost optimisation.

With Eden AI’s unified API, model comparison, monitoring tools, and advanced routing features, you can future-proof your product and keep full control of your AI infrastructure.

Frequently Asked Questions (FAQ)

What is Why Should Your Product Not Rely on a Single AI Provider?

Why Should Your Product Not Rely on a Single AI Provider is an AI-powered capability that helps developers and businesses automate workflows, improve decision accuracy, and process data at scale without manual intervention.

How does it work?

The process typically involves sending data (text, image, audio, or document) to an AI model via API, which returns structured results in JSON format. The model handles all the complexity behind the scenes.

What are the main use cases?

Common applications include document processing, content moderation, data extraction, language translation, and building intelligent chatbots or recommendation systems.

How do I get access to multiple providers?

Eden AI aggregates the best providers for this use case under a single API, letting you compare and switch between models without managing multiple accounts or API keys.

Is it suitable for production environments?

Yes. Most AI APIs offer SLAs, rate limits, and enterprise plans suitable for production use. Eden AI adds fallback routing and centralized monitoring to further improve reliability.

.jpg)

.png)