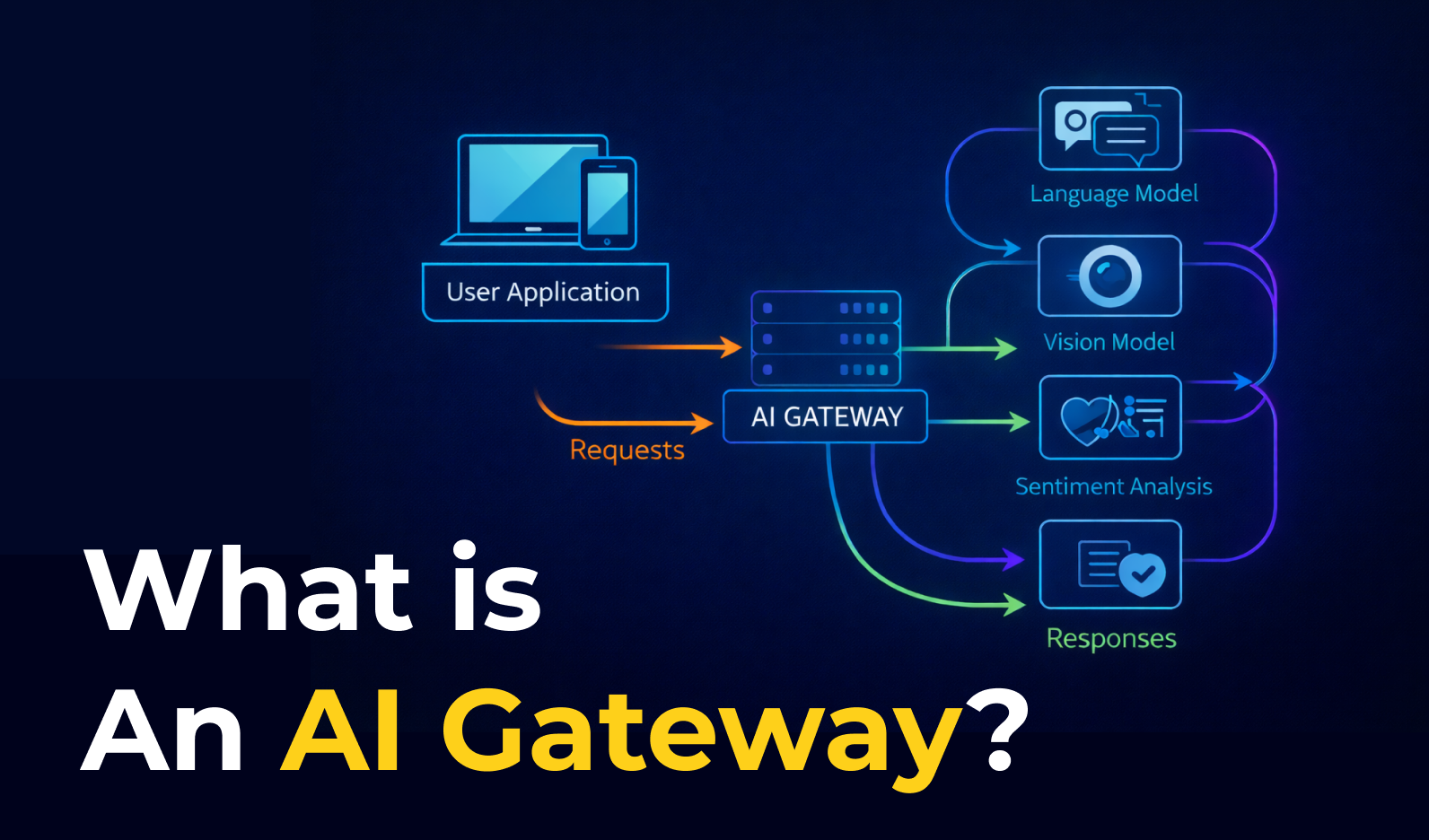

An AI Gateway is a control layer that helps developers connect to multiple AI models through one API while managing routing, security, observability, and cost. In this article, we explain what an AI Gateway is, how it works, its key features and benefits, how it differs from an API Gateway, and the best use cases for each.

An AI Gateway is a platform that helps businesses and developers connect, manage, and use multiple AI models through one interface. Instead of integrating separate APIs from providers like OpenAI, Anthropic, or Google, users can access them from a single platform.

For example, instead of integrating separately with OpenAI's GPT models, Anthropic's Claude, and Google's Gemini, a company can use an AI Gateway to access all three in one place. This becomes even more useful in advanced use cases: a business might use one model to answer customer questions, another to detect sentiment in messages, and a third to analyze images sent by users.

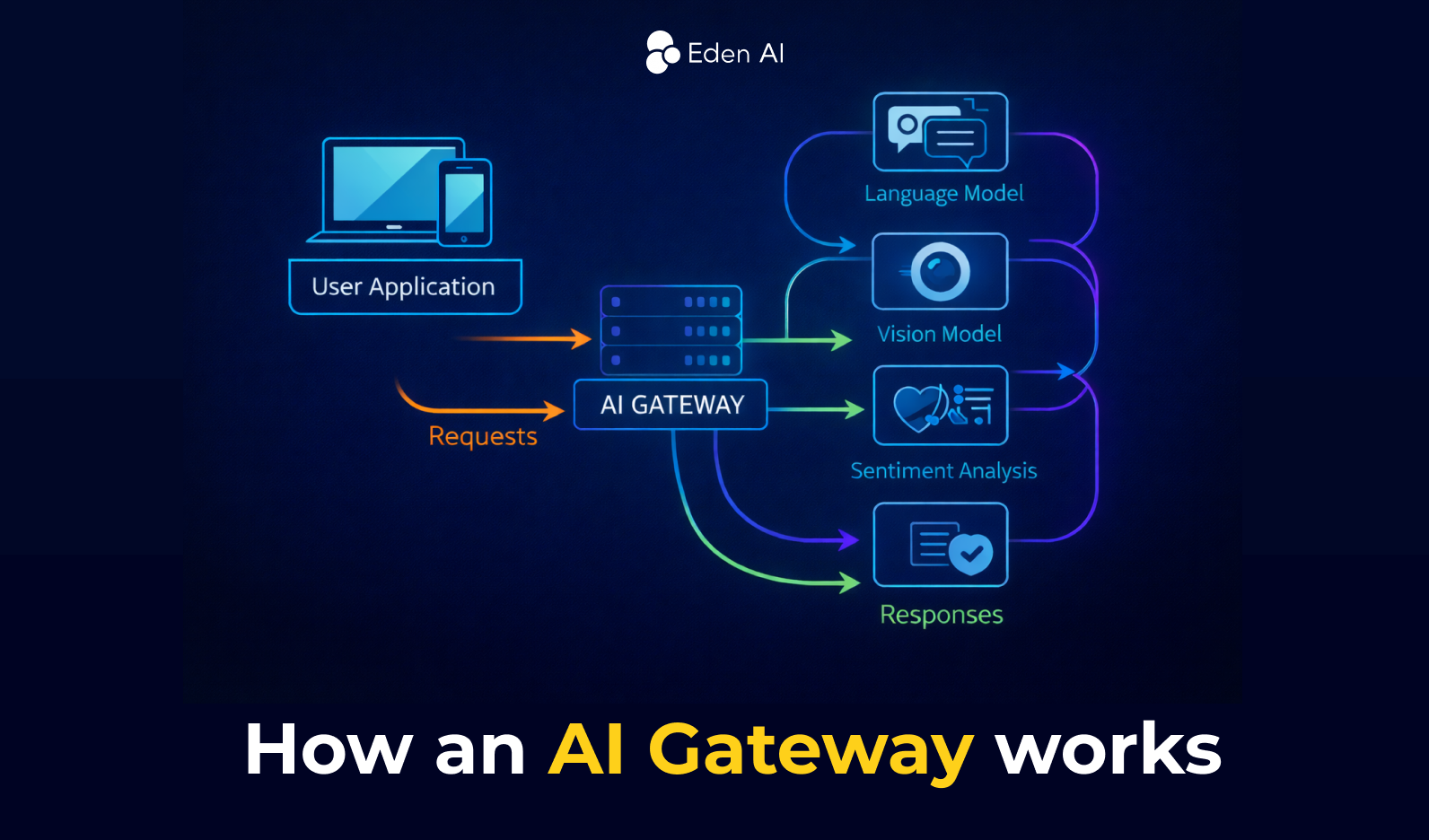

An AI Gateway acts as a layer between your application and the AI models you use. Every request goes through the gateway before reaching the model.

First, when a user sends a request, the AI Gateway receives it. For example, a customer may send feedback to your application that includes both text and an image.

Next, the gateway processes the request and decides where each part should go. It can send the text to a large language model, the image to a vision model, and the same message to a sentiment analysis model. At the same time, it can apply rules such as authentication, security checks, and data filtering.

Finally, once the models return their outputs, the AI Gateway combines the results into one standardized response and sends it back to the application.
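The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a real gateway: `llm_reply`, `sentiment`, and `describe_image` are hypothetical stand-ins for calls to a language model, a sentiment model, and a vision model, and `handle` plays the role of the gateway that fans one request out and merges the outputs into a single standardized response.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical stand-ins for real model calls; a gateway would call provider APIs here.
def llm_reply(text: str) -> str:
    return f"Thanks for your feedback: {text!r}"


def sentiment(text: str) -> str:
    # Toy keyword rule, not a real sentiment model.
    return "negative" if "broken" in text.lower() else "positive"


def describe_image(image: bytes) -> str:
    return f"image of {len(image)} bytes"


@dataclass
class UserRequest:
    text: str
    image: Optional[bytes] = None


def handle(request: UserRequest) -> dict:
    """Send each part of the request to a suitable model and merge the outputs."""
    results = {
        "reply": llm_reply(request.text),        # text -> language model
        "sentiment": sentiment(request.text),    # same text -> sentiment model
    }
    if request.image is not None:
        results["image_description"] = describe_image(request.image)  # image -> vision model
    # One standardized response shape, regardless of which models were involved.
    return {"status": "ok", "results": results}
```

For instance, `handle(UserRequest(text="The app is broken", image=b"\x89PNG"))` returns one dictionary holding the reply, a `"negative"` sentiment label, and the image description. The calls run sequentially here for readability; a production gateway would more likely issue them concurrently.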

An AI Gateway provides a set of core features that help manage how applications access and use AI models. It offers a unified API to connect to multiple providers, routes requests to the appropriate model, enforces security and usage policies, monitors performance and costs, and ensures scalable and reliable AI operations from a centralized control layer.

An AI Gateway exposes one standardized API endpoint for multiple AI models and providers. This unified interface hides differences between provider APIs, making it easier for developers to integrate and switch between models without changing their application code.
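One common way to build a unified API is with per-provider adapters. In the sketch below, the application sends one provider-neutral `ChatRequest`, and the gateway translates it into the request body each provider expects. The two payloads are simplified versions of the OpenAI Chat Completions and Anthropic Messages request shapes (the system prompt is a message in one and a top-level field in the other); real requests carry more fields, and parameter names can vary by model and API version. `ChatRequest`, `build_payload`, and the model name used below are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ChatRequest:
    """Provider-neutral request that the application sends to the gateway."""
    model: str
    system: str
    user: str
    max_tokens: int = 512


def to_openai_style(req: ChatRequest) -> dict:
    # Chat Completions style: the system prompt is just another message.
    return {
        "model": req.model,
        "max_tokens": req.max_tokens,
        "messages": [
            {"role": "system", "content": req.system},
            {"role": "user", "content": req.user},
        ],
    }


def to_anthropic_style(req: ChatRequest) -> dict:
    # Messages API style: the system prompt is a top-level field.
    return {
        "model": req.model,
        "max_tokens": req.max_tokens,
        "system": req.system,
        "messages": [{"role": "user", "content": req.user}],
    }


ADAPTERS: Dict[str, Callable[[ChatRequest], dict]] = {
    "openai": to_openai_style,
    "anthropic": to_anthropic_style,
}


def build_payload(provider: str, req: ChatRequest) -> dict:
    """Translate one neutral request into the body a given provider expects."""
    adapter = ADAPTERS.get(provider)
    if adapter is None:
        raise ValueError(f"unsupported provider: {provider}")
    return adapter(req)
```

Because the application only ever builds a `ChatRequest`, switching providers means changing the `provider` string, not the application code.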

The gateway decides which AI model should process each request. It can dynamically route requests based on performance, cost, or use case, while also managing model lifecycle operations such as deployment, versioning, updates, and rollbacks.
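A routing rule of this kind can be as simple as "pick the cheapest model that supports the task within the latency budget." The sketch below assumes a hypothetical in-memory model catalog; the model names, prices, and latencies are illustrative placeholders, not real provider figures.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ModelInfo:
    name: str
    tasks: FrozenSet[str]        # use cases this model may serve
    cost_per_1k_tokens: float    # illustrative numbers, not real prices
    p95_latency_ms: int


# Hypothetical catalog; a real gateway would load this from configuration.
CATALOG: List[ModelInfo] = [
    ModelInfo("large-llm", frozenset({"chat", "reasoning"}), 0.0100, 1800),
    ModelInfo("small-llm", frozenset({"chat"}), 0.0005, 400),
    ModelInfo("vision-model", frozenset({"image"}), 0.0040, 900),
]


def route(task: str, max_latency_ms: int) -> ModelInfo:
    """Return the cheapest model that supports `task` within the latency budget."""
    candidates = [
        m for m in CATALOG
        if task in m.tasks and m.p95_latency_ms <= max_latency_ms
    ]
    if not candidates:
        raise LookupError(f"no model for task={task!r} within {max_latency_ms} ms")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)
```

With this catalog, `route("chat", 2000)` picks `small-llm` (both chat models qualify, and it is cheaper), while `route("reasoning", 1000)` fails because the only reasoning model is too slow for that budget.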

AI gateways enforce authentication, authorization, and encryption to protect AI systems. They can filter sensitive data, help mitigate prompt injection attacks, and apply policies that control who can access specific models or datasets.
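As an example of data filtering, a gateway can mask sensitive values in a prompt before it leaves for an external provider. The sketch below uses two deliberately simple regular expressions (email addresses and 13 to 16 digit card-like numbers); the patterns and the `mask_sensitive` name are assumptions for illustration, and real PII detection needs far more than this.

```python
import re

# Illustrative patterns only; production PII detection needs much more coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or dashes.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def mask_sensitive(text: str) -> str:
    """Replace every match of each pattern with a placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

For example, `mask_sensitive("Contact jane.doe@example.com, card 4111 1111 1111 1111 please")` yields `"Contact [EMAIL], card [CARD] please"`, so the provider never sees the raw values.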

The gateway helps manage AI consumption through rate limiting, quotas, and token tracking. This allows teams to monitor usage and prevent excessive costs when using external AI providers.
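Rate limiting and token quotas are two separate controls, and a gateway typically applies both per API key. The sketch below pairs a classic token-bucket limiter (capping requests per second) with a simple LLM-token budget. Note that the bucket's "allowance" counts requests, not LLM tokens. The class names are hypothetical, the injectable `clock` exists only to make the sketch testable, and quota resets (for example, daily) are left out.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RateLimiter:
    """Token-bucket limiter: on average `rate` requests/second, bursts up to `capacity`."""
    rate: float
    capacity: float
    clock: Callable[[], float] = time.monotonic
    allowance: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.allowance = self.capacity  # start with a full bucket
        self.last = self.clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.allowance = min(self.capacity, self.allowance + (now - self.last) * self.rate)
        self.last = now
        if self.allowance >= 1:
            self.allowance -= 1
            return True
        return False


@dataclass
class TokenQuota:
    """Tracks LLM tokens consumed by one API key against a fixed budget."""
    limit: int
    used: int = 0

    def has_budget(self) -> bool:
        return self.used < self.limit

    def record(self, prompt_tokens: int, completion_tokens: int) -> None:
        # Called after the provider reports usage for a completed request.
        self.used += prompt_tokens + completion_tokens


def admit(limiter: RateLimiter, quota: TokenQuota) -> bool:
    """Forward a request only if the key has budget left and is under its request rate."""
    return quota.has_budget() and limiter.allow()
```

The quota is checked before forwarding and charged after the response, because the exact token count is only known once the provider replies.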

AI gateways track performance metrics such as latency, errors, token usage, and request volume. Centralized logging and dashboards provide visibility into AI activity, helping teams detect issues quickly and optimize performance.
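To make these metrics concrete, here is a minimal in-memory collector that records latency, errors, and token usage per model and summarizes them. It is a sketch under the assumption that the gateway calls `record` once per request; a real deployment would export such numbers to a monitoring backend rather than keep them in process memory.

```python
import statistics
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ModelStats:
    latencies_ms: List[float] = field(default_factory=list)
    errors: int = 0
    tokens: int = 0


class Metrics:
    """Per-model counters for request volume, errors, latency, and token usage."""

    def __init__(self) -> None:
        self.by_model: Dict[str, ModelStats] = defaultdict(ModelStats)

    def record(self, model: str, latency_ms: float, tokens: int, ok: bool) -> None:
        stats = self.by_model[model]
        stats.latencies_ms.append(latency_ms)
        stats.tokens += tokens
        if not ok:
            stats.errors += 1

    def summary(self, model: str) -> dict:
        stats = self.by_model[model]
        requests = len(stats.latencies_ms)
        return {
            "requests": requests,
            "error_rate": stats.errors / requests if requests else 0.0,
            "median_latency_ms": statistics.median(stats.latencies_ms) if requests else None,
            "tokens": stats.tokens,
        }
```

After recording three requests to one model with latencies of 100, 300, and 200 ms (one of them failed), `summary` reports 3 requests, an error rate of one third, and a median latency of 200 ms.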

Before sending requests to models, the gateway can clean, normalize, enrich, or transform data. This includes adding context to prompts, filtering sensitive information, or formatting data so that it works consistently across different models.
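A small example of such preprocessing: normalize whitespace in the user's text, cap its length, prepend retrieved context, and emit a chat-style message list that can then be adapted to any provider. `prepare_prompt` and the `MAX_PROMPT_CHARS` limit are hypothetical choices for this sketch, and the context documents are assumed to come from some retrieval step that is not shown.

```python
import re
from typing import Dict, List

MAX_PROMPT_CHARS = 2000  # hypothetical limit for this sketch


def prepare_prompt(user_text: str, context_docs: List[str], system: str) -> List[Dict[str, str]]:
    """Normalize the user's text, add context, and build a chat-style message list."""
    # Collapse runs of whitespace (including newlines) and trim to the length cap.
    cleaned = re.sub(r"\s+", " ", user_text).strip()[:MAX_PROMPT_CHARS]
    # Keep only non-empty context snippets, one bullet per document.
    context = "\n".join(f"- {doc.strip()}" for doc in context_docs if doc.strip())
    user_content = f"Context:\n{context}\n\nQuestion: {cleaned}" if context else cleaned
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_content},
    ]
```

When no context is available, the cleaned question is passed through unchanged, so the same function works for both enriched and plain requests.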

AI gateways manage load balancing and scalable model serving, ensuring that AI requests are distributed across available model instances. This enables reliable real-time or batch inference even when workloads increase.
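Round-robin with health checks is one simple load-balancing strategy a gateway might use (others include least-connections or latency-aware selection). The sketch below cycles through model instances and skips any marked unhealthy; the instance names are hypothetical, and how instances get marked up or down (for example, by periodic health probes) is left out.

```python
import itertools
from typing import List, Set


class RoundRobinBalancer:
    """Cycles through model instances, skipping any currently marked unhealthy."""

    def __init__(self, instances: List[str]) -> None:
        self.instances = list(instances)
        self.unhealthy: Set[str] = set()
        self._cycle = itertools.cycle(self.instances)

    def mark_down(self, instance: str) -> None:
        self.unhealthy.add(instance)

    def mark_up(self, instance: str) -> None:
        self.unhealthy.discard(instance)

    def next_instance(self) -> str:
        # Try at most one full rotation before giving up.
        for _ in range(len(self.instances)):
            candidate = next(self._cycle)
            if candidate not in self.unhealthy:
                return candidate
        raise RuntimeError("no healthy instances available")
```

With instances `a`, `b`, and `c`, requests go to `a`, `b`, `c`, `a`, and so on; once `b` is marked down, traffic alternates between `a` and `c` until `b` is marked up again.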

Using an AI Gateway helps teams manage AI models more securely and efficiently by controlling everything from one layer, instead of building complex infrastructure for routing, monitoring, and security.

AI gateways protect applications by enforcing authentication, role-based access control (RBAC), credential management, and data protection policies. They reduce the risk of sensitive data leaks and help defend against threats like prompt injection or malicious API usage.
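Authentication and role-based access control can be illustrated with two lookups: API key to role, then role to allowed models. Everything below (keys, roles, model names, and the `"*"` wildcard convention) is hypothetical; a real gateway would store hashed credentials and load its policy from configuration.

```python
from typing import Dict, Set

# Hypothetical data; never store real API keys in plain text like this.
API_KEYS: Dict[str, str] = {"key-123": "analyst", "key-456": "engineer", "key-789": "admin"}

POLICY: Dict[str, Set[str]] = {
    "analyst": {"small-llm"},
    "engineer": {"small-llm", "large-llm"},
    "admin": {"*"},  # wildcard: any model
}


def authorize(role: str, model: str) -> bool:
    """Return True if `role` may call `model` under POLICY."""
    allowed = POLICY.get(role, set())
    return "*" in allowed or model in allowed


def check_access(api_key: str, model: str) -> str:
    """Authenticate the key, then authorize the model; return the caller's role."""
    role = API_KEYS.get(api_key)
    if role is None:
        raise PermissionError("unknown API key")                      # authentication
    if not authorize(role, model):
        raise PermissionError(f"role {role!r} may not use {model!r}")  # authorization
    return role
```

Here an analyst key can reach `small-llm` but is rejected for `large-llm`, and unknown keys are refused before any policy is evaluated.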

A centralized gateway makes it easier to manage multiple AI models and providers. Developers can control routing, policies, monitoring, and usage tracking from a single place instead of maintaining separate integrations.

AI gateways automatically balance traffic across model instances, optimize resource usage, and maintain stable performance as AI workloads grow.

Gateways track metrics such as latency, errors, token usage, and model activity. This visibility helps teams detect issues early and optimize costs and performance.

By integrating with CI/CD pipelines and DevOps workflows, AI gateways enable faster experimentation, easier updates, and quicker deployment of AI-powered features.

AI gateways provide unified access to different AI models and providers, allowing teams to test, switch, or combine models quickly when building new AI applications.
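Switching between providers is often implemented as an ordered fallback chain: try the primary provider and, if it fails, move on to the next one. In the sketch below, `ProviderError` and the provider callables are hypothetical; a real gateway would decide which failures (timeouts, 5xx responses, rate limits) justify a fallback and would usually add retries and timeouts.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Raised by a provider call that failed (timeout, server error, rate limit, ...)."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, answer) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why this provider failed
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

Returning the provider name alongside the answer lets the gateway log which model actually served each request, which feeds directly into the monitoring described earlier.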

An API Gateway is built for traditional application APIs, while an AI Gateway is built for AI workloads and adds features like model routing, token tracking, prompt security, and cost control.

An AI Gateway is the better choice when your application relies on LLMs or other AI models. It adds AI-specific features such as model routing, fallback between providers, token tracking, prompt filtering, data masking, and AI cost monitoring.

An API Gateway is the right choice when your application mainly connects to backend services, databases, or microservices through traditional APIs. It helps with request routing, authentication, rate limiting, caching, and monitoring.

Choosing the right AI gateway in 2026 requires evaluating critical factors beyond basic model routing. Here are 10 key criteria to consider when comparing AI gateways:

When choosing an AI Gateway, teams building production AI systems should prioritize performance, reliability, and observability, whereas enterprises may care more about governance, compliance, and ecosystem fit. Startups and developers testing multiple models may prioritize provider coverage, flexibility, and ease of integration.

The best AI gateway in 2026 depends on your setup: some platforms are built for edge performance, others for enterprise governance, and others for fast experimentation. Below, we present five of the top AI gateway platforms in 2026 based on their ideal use cases.

An API Gateway manages standard API traffic between clients and backend services, while an AI Gateway is built specifically for AI workloads such as LLM prompts, model responses, embeddings, and AI provider routing.

You should use an AI Gateway when your application relies on AI models in production, especially if you need model routing, fallback between providers, token usage tracking, prompt security, or AI cost control.

An API Gateway is the right choice when you need to manage traditional APIs, microservices, authentication, rate limiting, caching, and backend traffic for web or mobile applications.

An AI Gateway is not always necessary if you use only one AI provider, but it can still be useful. Even with a single provider, it can improve security, observability, governance, and cost monitoring, especially in production environments.

An AI Gateway does not always replace an API Gateway. An AI Gateway is designed for AI-specific traffic, while an API Gateway manages general application APIs. Many companies use both: an API Gateway for backend services and an AI Gateway for AI model interactions.

You can start building right away. If you have any questions, feel free to chat with us!
